Key Takeaways

- The enterprise AI debate has moved on. The question is no longer whether to adopt agentic AI — it is how to operate it reliably at scale.

- Multi-agent orchestration is the new competitive moat: coordinating specialized agents across workflows is harder than building them.

- Governance, data quality, and audit infrastructure must come before scale — not after.

- Data quality is the silent failure point. Agents running on dirty data produce confident wrong outputs, not just bad ones.

- Beam Data AI Hub provides the data foundation layer that makes orchestration trustworthy and scalable.

In This Article

– Has Enterprise AI Crossed the Tipping Point?

– From Single Agents to Enterprise-Scale Orchestration

– What Is Breaking in Enterprise Deployments Right Now

– The Governance Layer: Build the Control Framework First

– What the Agentic Enterprise Looks Like in Practice

– How Beam Data AI Hub Fits Into Your Agentic Architecture

– FAQ

Has Enterprise AI Crossed the Tipping Point?

Multi-agent orchestration is the coordination layer that manages multiple specialized AI agents working together across enterprise workflows — routing tasks between specialists, enforcing guardrails, managing shared memory, and tracking outcomes end-to-end. It is what transforms a capable single agent into a reliable enterprise system.

Something shifted in Q1 2026. At Nexus 2026, more than 1,000 enterprise leaders arrived not to be convinced about agentic AI — they arrived to compare notes on what they had already built and, more often than not, what had failed. The tone was not evangelism. It was engineering.

The ‘figuring it out’ phase is closing. Gartner projects that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from fewer than 5% in 2025. That is not a gradual curve. That is a step change.

If you read our last piece on agentic workflows and what they mean for prompt engineering, this is the sequel — not the definition of what agentic AI is, but what it takes to run it reliably across a real enterprise.

The organizations pulling ahead are not the ones who adopted AI earliest. They are the ones who built the governance, data infrastructure, and orchestration layer first. That infrastructure advantage compounds.

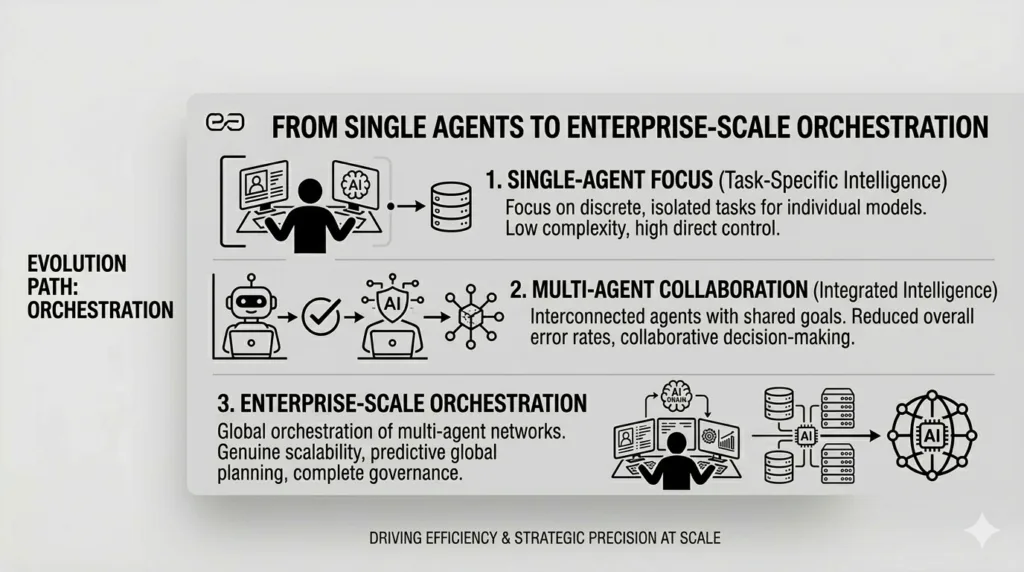

From Single Agents to Enterprise-Scale Orchestration

A single well-designed agent can handle a discrete task with impressive accuracy. Ask it to summarize a document, generate a report, or classify an incoming ticket — it performs. But real enterprise workflows are not discrete tasks. They span multiple systems, data sources, teams, and decision points, often simultaneously.

This is where single-agent architectures hit their ceiling, and where orchestration becomes the actual challenge.

Orchestration is the control layer that coordinates agent lifecycles — routing tasks between specialists, managing shared memory, enforcing guardrails, handling failures, and tracking outcomes across the full workflow. As IBM’s Chris Hay put it at a recent industry event: the next frontier is not building better individual agents, it is coordinating networks of them reliably.

Three orchestration patterns dominate enterprise deployments:

- Sequential — the output of one agent feeds directly into the next, building context step by step.

- Parallel — multiple agents work simultaneously on sub-tasks, with results merged by an orchestrator.

- Hierarchical — a master orchestrator manages a team of specialist sub-agents, each fine-tuned for a specific domain.

Most real enterprise implementations combine all three. Designing how agents hand off work, resolve conflicts, and escalate to humans is fast becoming a foundational skill for every technology and data leader.
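The three patterns above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production orchestrator: the `Agent` type, the helper functions, and the stand-in agents `summarize` and `classify` are all hypothetical names invented for this example, and the hierarchical case is reduced to simple routing by domain.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Assumption: an "agent" is modeled as a function from text to text.
Agent = Callable[[str], str]


def run_sequential(agents: list[Agent], task: str) -> str:
    """Sequential: each agent's output becomes the next agent's input."""
    result = task
    for agent in agents:
        result = agent(result)
    return result


def run_parallel(agents: list[Agent], task: str,
                 merge: Callable[[list[str]], str]) -> str:
    """Parallel: every agent works on the task at once; results are merged."""
    with ThreadPoolExecutor() as pool:
        # pool.map returns results in the same order as the agents list.
        results = list(pool.map(lambda agent: agent(task), agents))
    return merge(results)


def run_hierarchical(specialists: dict[str, Agent],
                     route: Callable[[str], str], task: str) -> str:
    """Hierarchical: an orchestrator picks the specialist for the task's domain."""
    domain = route(task)
    return specialists[domain](task)


# Stand-in agents for illustration only.
def summarize(text: str) -> str:
    return f"summary({text})"


def classify(text: str) -> str:
    return f"label({text})"
```

In a real deployment each agent call would be a model or service invocation, and the merge and routing steps would carry the conflict-resolution and escalation logic the paragraph above describes.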

What Is Breaking in Enterprise Deployments Right Now

The industry is not waiting for standards to settle. Several significant developments from early 2026 signal where the market is heading and where the real friction points are.

NVIDIA’s open agent infrastructure

At GTC 2026, NVIDIA announced the Agent Toolkit and OpenShell — an open-source runtime that enforces policy-based security, network, and privacy guardrails for autonomous agents. Jensen Huang’s framing was deliberate: enterprise software is evolving into a layer of specialized agentic platforms, and the infrastructure holding them together determines whether they can be trusted.

Oracle’s data-native approach

At Oracle AI World Tour London in March 2026, Oracle unveiled the AI Database Private Agent Factory — a no-code agent builder architected directly into the database layer. The design intent is clear: agents should access real-time enterprise data without it ever leaving the security boundary.

Identity and shadow agents

Okta’s 2026 blueprint for the secure agentic enterprise surfaced a challenge that most CIOs are not yet prepared for: shadow agents — unsanctioned AI tools built by employees outside IT oversight. Okta framed three non-negotiable questions every organization must answer: Where are my agents? What can they connect to? What are they authorized to do?

These are not theoretical concerns. They are live governance gaps in most enterprises today.
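One way to make those three questions answerable is a central agent registry. The sketch below is an assumption-laden illustration, not Okta's model or any vendor's API: `AgentRecord`, `AgentRegistry`, and their method names are hypothetical. The key design choice is deny-by-default, so an unregistered (shadow) agent gets no access.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str                    # team accountable for the agent
    connections: frozenset[str]   # systems the agent may connect to
    permissions: frozenset[str]   # actions the agent is authorized to take


@dataclass
class AgentRegistry:
    _agents: dict[str, AgentRecord] = field(default_factory=dict)

    def register(self, record: AgentRecord) -> None:
        self._agents[record.agent_id] = record

    def where_are_my_agents(self) -> list[str]:
        """Where are my agents? Lists every sanctioned agent."""
        return sorted(self._agents)

    def can_connect(self, agent_id: str, system: str) -> bool:
        """What can they connect to? Unknown agents are denied."""
        record = self._agents.get(agent_id)
        return record is not None and system in record.connections

    def is_authorized(self, agent_id: str, action: str) -> bool:
        """What are they authorized to do? Unknown agents are denied."""
        record = self._agents.get(agent_id)
        return record is not None and action in record.permissions
```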

The Governance Layer: Build the Control Framework First

The clearest pattern among the successful enterprises featured at Nexus 2026 (Lufthansa, Schwarz Group, and Fabletics among them) was sequencing. They made governance and architecture decisions before scaling, not after.

The starting point is not tooling. It is mapping your workflows to the right level of autonomy:

- Human-in-the-loop — agent recommends, human approves each step. Appropriate for high-stakes or novel decisions.

- Human-on-the-loop — agent executes, human monitors with override capability. Right for well-defined processes with exception handling.

- Fully autonomous — agent executes end-to-end, telemetry only. Reserved for well-governed, low-risk, high-volume workflows.
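The mapping from workflow to autonomy tier can be written down as an explicit rule rather than left to case-by-case judgment. The following is a minimal sketch under stated assumptions: the four boolean workflow attributes and the function names are hypothetical, chosen to mirror the criteria in the list above.

```python
from enum import Enum


class Autonomy(Enum):
    HUMAN_IN_THE_LOOP = "agent recommends, human approves each step"
    HUMAN_ON_THE_LOOP = "agent executes, human monitors and can override"
    FULLY_AUTONOMOUS = "agent executes end-to-end, telemetry only"


def assign_autonomy(high_stakes: bool, novel: bool,
                    well_governed: bool, high_volume: bool) -> Autonomy:
    """Pick the most restrictive tier the workflow's risk profile calls for."""
    if high_stakes or novel:
        return Autonomy.HUMAN_IN_THE_LOOP
    if well_governed and high_volume:
        return Autonomy.FULLY_AUTONOMOUS
    # Everything else: well-defined work that still needs a human watching.
    return Autonomy.HUMAN_ON_THE_LOOP


def needs_approval(tier: Autonomy) -> bool:
    """Only the human-in-the-loop tier blocks on approval before each step."""
    return tier is Autonomy.HUMAN_IN_THE_LOOP
```

Checking the high-risk conditions first means a workflow can never reach the fully autonomous tier by accident: it has to be low-risk and well-governed and high-volume.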

Beyond autonomy levels, production-grade agentic systems need verifiable audit trails for every agent action. When an AI agent makes a poor call (and eventually one will), the ability to reconstruct exactly what happened, what data it accessed, and which decisions it made is an operational necessity and, in regulated industries, often a legal requirement. Build this into the architecture from day one, not as a retrofit.
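One common way to make an audit trail verifiable is to chain entries by hash, so that editing any past entry breaks every hash after it. The sketch below assumes that technique; it is not described in the sources cited here, and the `AuditTrail` class and its field names are hypothetical. It keeps entries in memory only, whereas a real system would write to append-only storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """In-memory, hash-chained log of agent actions (illustrative only)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, agent_id: str, action: str,
               data_accessed: list[str], decision: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "data_accessed": data_accessed,
            "decision": decision,
            # Link to the previous entry so history cannot be silently edited.
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the hash itself, in a stable key order.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Return True only if no entry was altered, removed, or reordered."""
        prev = ""
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```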

The Data Foundation Problem (And Why It Breaks Everything Else)

Governance frameworks and orchestration patterns both assume one thing: that the data agents are working with is clean, structured, and trustworthy. In most enterprises, it is not.

According to Reltio’s 2025 data quality research, between 50 and 80 percent of data scientists’ time is consumed by data preparation before any analysis or AI work begins. Agentic systems do not make this problem smaller; they amplify it. An agent running on fragmented or inconsistent data does not produce hedged or uncertain outputs. It produces confident, wrong outputs, at the speed and scale of automation.

Three failure modes appear consistently in enterprise agentic deployments:

- Hallucination amplification — agents extrapolate from bad data and present the result with authority.

- Context collapse — poor data structure causes agents to lose thread across multi-step tasks, producing incoherent outcomes.

- Pipeline poisoning — a bad upstream data source corrupts every downstream agent that depends on it.

What ‘agent-ready data’ actually requires is deliberate architecture: clean pipelines, governed access, structured context, provenance tagging, and real-time API accessibility. This is not standard enterprise data infrastructure. It requires intentional investment.
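Provenance tagging and a validation gate can be illustrated with a small check that runs before any record reaches an agent. This is a sketch under assumptions, not a description of any particular product: `TaggedRecord`, `is_agent_ready`, and the three checks (required fields, trusted source, freshness) are hypothetical choices made for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class TaggedRecord:
    payload: dict
    source: str            # provenance: which system the record came from
    fetched_at: datetime   # provenance: when it was retrieved


def is_agent_ready(record: TaggedRecord, required_fields: set[str],
                   trusted_sources: set[str], max_age: timedelta,
                   now: Optional[datetime] = None) -> bool:
    """Gate a record before it reaches any downstream agent."""
    now = now or datetime.now(timezone.utc)
    # Every required field must be present and non-empty.
    has_fields = all(record.payload.get(name) not in (None, "")
                     for name in required_fields)
    is_trusted = record.source in trusted_sources
    is_fresh = now - record.fetched_at <= max_age
    return has_fields and is_trusted and is_fresh
```

Rejecting a record at this gate is what keeps one bad upstream source from poisoning every agent that depends on it.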

These failure modes are not edge cases — they are the default outcome when agents are deployed without a governed data foundation. At Beam Data, we see this consistently across enterprise engagements: orchestration architecture gets prioritized, data infrastructure gets deferred, and the agents pay the price.

Beam Data AI Hub is purpose-built to close that gap. AI Hub provides the governed data foundation layer — clean pipelines, structured enterprise context, and secure access controls — so the agents and orchestration systems built on top of it are working from a trusted source of truth, not guessing from noise.

As IBM Research describes it, the next evolution is self-aware enterprise data systems — agents that continuously scan, profile, and index enterprise knowledge across semantic dimensions. The organizations building clean data infrastructure today will be the first to unlock that capability.

What the Agentic Enterprise Looks Like in Practice

This is not a future state. Enterprises are running multi-agent systems in production today.

Lufthansa Group’s deployment — referenced at Nexus 2026 — represents one of the largest conversational agentic AI implementations globally. Human agents are now reserved for judgment-intensive moments. Structured, high-volume interactions are handled end-to-end by the agentic layer.

Schwarz Group, the parent company of Lidl and Kaufland, is building what it describes as Europe’s largest agentic AI workforce — navigating data sovereignty requirements and multi-agent coordination across a continent-spanning retail operation.

In engineering teams, the role is shifting. Engineers in 2026 spend less time writing code and more time orchestrating agents: defining objectives, reviewing outputs, validating alignment with business intent. The skill is moving from execution to direction.

The ‘agentic department’ is emerging as an organizational pattern — functions where agents handle the majority of repetitive workflow volume, with human expertise focused on exceptions, edge cases, and final approvals.

How Beam Data AI Hub Fits Into Your Agentic Architecture

Every enterprise agentic deployment has three layers: a data foundation, an orchestration layer, and the agent workforce. Most platform vendors compete at the orchestration or agent layer. Beam Data AI Hub is purpose-built for the foundation — the layer everything else depends on.

AI Hub unifies enterprise data for agent consumption: clean pipelines, a single governed access layer, structured context, and secure API access — so specialist agents across your organization are all working from the same trusted source of truth, not from siloed, inconsistent extracts.

As enterprises scale from one or two agents to dozens or hundreds, data access becomes chaotic. Each agent pulls from different sources with different schemas and update frequencies. AI Hub resolves this by providing a single coherent access layer, making orchestration possible without the fragmentation that undermines it.

With identity, shadow agents, and data sovereignty emerging as first-tier risks in 2026, AI Hub’s governed access model ensures agents only access data they are authorized to access — with a full audit trail of what was accessed, when, and by which agent.

Ready to make your enterprise data agent-ready? See how Beam Data AI Hub gives your orchestration layer the foundation it needs to operate reliably at scale. Explore AI Hub →

Where This Goes Next

The next frontier is cross-enterprise agent networks — what IBM and NVIDIA are calling the Internet of Agents. Orchestrated AI systems from different organizations collaborating securely across boundaries, with interoperability standards like MCP, ACP, and A2A competing to define how that collaboration works.

Gartner projects that by 2028, 58% of business functions will have AI agents managing at least one process daily. That makes orchestration infrastructure as foundational as cloud — and the data layer that underpins it equally critical.

The real race is not about having more agents. It is about who has the cleanest data, the tightest governance, and the most reliable orchestration layer. That combination compounds. Start building it now.

Frequently Asked Questions

What is the difference between an agentic workflow and multi-agent orchestration?

An agentic workflow is the design pattern — a single AI agent iterating through planning, action, and self-correction to complete a goal. Multi-agent orchestration is the coordination layer that manages multiple specialized agents working together on complex enterprise tasks. Orchestration is what makes agentic workflows scalable.

Why does data quality matter so much for agentic AI?

Agentic systems amplify whatever is in your data. A single bad prompt gives you one bad output. An agent running on inconsistent data produces confident, wrong outputs across every step of an automated workflow, at scale and at speed. Data quality is not a nice-to-have: it determines whether your agentic deployments produce value or produce risk.

What is a governance framework for agentic AI?

A governance framework defines which agents exist in your environment, what data and systems they can access, what level of autonomy they operate at, and how their actions are logged and audited. It maps workflows to the right autonomy tier — from human-in-the-loop through to fully autonomous — and establishes policies for handling shadow agents, edge cases, and escalation paths.

How does Beam Data AI Hub support multi-agent deployments?

Beam Data AI Hub provides the data foundation layer — clean, governed, structured enterprise data accessible via secure APIs. This ensures that every agent in your orchestrated system works from the same trusted source of truth. It eliminates the data fragmentation that causes context collapse and hallucination amplification, and provides a full audit trail for governed access.

What orchestration frameworks should enterprises evaluate?

The leading options as of 2026 include LangGraph, CrewAI, Microsoft AutoGen, and the OpenAI Agents SDK. The right choice depends on your technical maturity, existing infrastructure, and governance requirements. The more important decision is to architect for interoperability from the start, avoiding vendor lock-in as standards like MCP, ACP, and A2A continue to evolve.

Is full automation the goal of agentic AI?

No. Controlled autonomy is the goal. The most effective enterprise deployments combine dynamic AI execution with deterministic guardrails and human judgment at key decision points. Full automation is appropriate for well-governed, low-risk, high-volume workflows. High-stakes or novel decisions should retain human oversight. Sequencing the rollout of autonomy by workflow risk level is the approach that consistently succeeds.