Key Takeaways

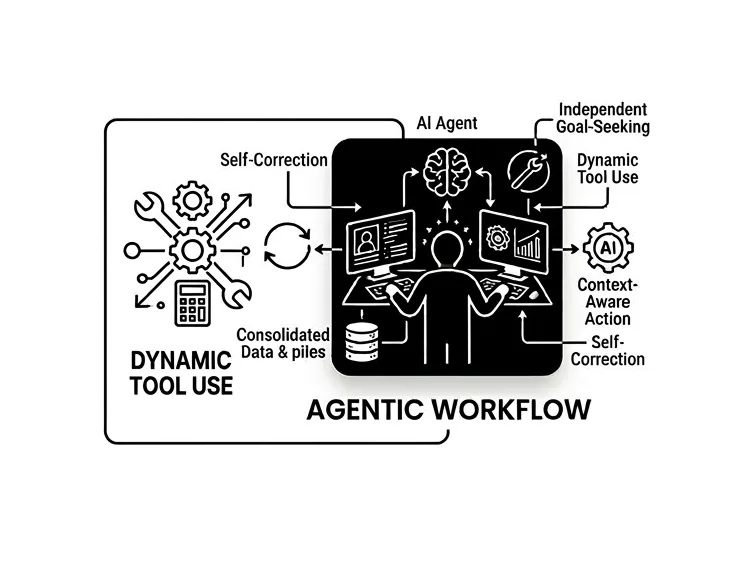

- An agentic workflow is an iterative AI loop — not a one-off prompt

- Agents plan, act, use tools, and self-correct until a goal is reached

- This shifts prompt engineering from chasing output quality to defining behavioral guardrails

- Multi-agent workflow design is the foundation of scalable enterprise AI automation

- The real business value isn’t smarter answers — it’s autonomous processes that run without constant human input

What is an Agentic Workflow?

An agentic workflow is a design pattern where a Large Language Model moves through an iterative cycle of planning, action, and self-correction to complete a goal. Unlike a standard prompt — which produces a single output — an agentic workflow lets AI use external tools, evaluate its own results, and refine them autonomously. The result is a system that operates, not just responds.
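As a rough illustration of that cycle, here is a minimal Python sketch. The names `run_agent_loop`, `toy_act`, and `toy_evaluate` are hypothetical and not part of any particular framework; in a real system the `act` step would call a model (possibly with tools) and the `evaluate` step would be a test suite, a validator, or a second model call.

```python
from __future__ import annotations
from typing import Callable


def run_agent_loop(
    goal: str,
    act: Callable[[str, list[str]], str],
    evaluate: Callable[[str, str], tuple[bool, str]],
    max_steps: int = 5,
) -> tuple[str, int]:
    """Repeat act -> evaluate until the result is accepted or the step budget runs out."""
    feedback: list[str] = []
    result = ""
    for step in range(1, max_steps + 1):
        result = act(goal, feedback)            # produce an attempt
        ok, critique = evaluate(goal, result)   # check the attempt against the goal
        if ok:
            return result, step
        feedback.append(critique)               # self-correction: critique feeds the next attempt
    return result, max_steps


# Toy stand-ins so the sketch runs without a model (assumptions for illustration only).
def toy_act(goal: str, feedback: list[str]) -> str:
    return "draft" + "!" * len(feedback)


def toy_evaluate(goal: str, result: str) -> tuple[bool, str]:
    return result.endswith("!!"), "needs more emphasis"


final, steps = run_agent_loop("write an emphatic draft", toy_act, toy_evaluate)
print(final, steps)
```

The `max_steps` budget is the simplest possible guardrail: without it, a loop whose evaluator never accepts would run indefinitely.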

Why the “Chatbot” Mental Model No Longer Works

Most enterprise leaders were introduced to AI through chat interfaces. You type a question, you get an answer. Clean, simple, and — for anything genuinely complex — deeply limited.

That model made sense for AI’s first act. It doesn’t make sense anymore.

The organizations seeing real productivity gains aren’t using AI to answer questions faster. They’re using it to run processes autonomously — processes that used to require a human to quarterback every step. That’s the core idea behind agentic design, and it’s a meaningful shift in how you think about what AI is actually for.

How Agentic AI Differs from Standard Prompting

The easiest way to understand the difference is to look at what happens when something goes wrong.

In a standard AI setup, if the output is wrong, you fix your prompt and try again. The AI has no awareness that it failed. In an agentic system, the AI is built to detect the failure itself, diagnose why it happened, and correct it without you intervening.

Here’s how the two approaches compare across the dimensions that matter most in an enterprise context:

| Feature | Standard AI (Zero-Shot) | Agentic Workflow (Iterative) |
| --- | --- | --- |
| Process | Linear: Input → Output | Iterative: Input → Plan → Act → Reflect → Output |
| Reasoning | Predictive (Best guess) | Strategic (Step-by-step logic) |
| Prompt Focus | Output Quality | Behavioral Guardrails |
| Self-Correction | No | Yes (Identifies and fixes its own errors) |

The practical implication: standard AI is a capable assistant. Agentic AI is closer to a capable employee.

The 4 Design Patterns Behind Every Agentic System

When you look under the hood of most enterprise agentic deployments, you’ll find the same four patterns doing the heavy lifting. These aren’t theoretical — they’re the building blocks your team will configure when you move from pilots to production.

1. Reflection (The Self-Editor)

The agent reviews its own output before surfacing it. In a code generation context, this means the agent writes the code, checks it (by tracing through the logic or, better, by actually running it against tests), spots the bug, fixes it, and only then presents a result. Reflection is often credited with a large share of the accuracy gains cited in agentic AI benchmarks.
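A hedged sketch of reflection for code generation follows. It assumes the check is done by executing the candidate against test cases; `fake_generate` is a hypothetical stand-in for a model call, and `check_candidate` and `reflect_and_fix` are illustrative names rather than any library's API.

```python
from __future__ import annotations
from typing import Callable


def check_candidate(source: str, cases: list[tuple[tuple, object]], func_name: str) -> str | None:
    """Run candidate source against test cases; return a critique, or None if all pass."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # NOTE: untrusted code must be sandboxed in a real deployment
        func = namespace[func_name]
        for args, expected in cases:
            actual = func(*args)
            if actual != expected:
                return f"{func_name}{args} returned {actual!r}, expected {expected!r}"
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"
    return None


def reflect_and_fix(
    generate: Callable[[str | None], str],
    cases: list[tuple[tuple, object]],
    func_name: str,
    max_rounds: int = 3,
) -> tuple[str, int]:
    """Generate, check, and regenerate with the critique until the checks pass."""
    critique = None
    for round_number in range(1, max_rounds + 1):
        source = generate(critique)
        critique = check_candidate(source, cases, func_name)
        if critique is None:
            return source, round_number
    raise RuntimeError(f"no passing candidate after {max_rounds} rounds: {critique}")


# Hypothetical generator: the first attempt has a bug, the second (after critique) is fixed.
def fake_generate(critique: str | None) -> str:
    if critique is None:
        return "def mean(xs):\n    return sum(xs) / (len(xs) - 1)\n"
    return "def mean(xs):\n    return sum(xs) / len(xs)\n"


cases = [(([2, 4, 6],), 4.0)]
source, rounds = reflect_and_fix(fake_generate, cases, "mean")
print(rounds)
```

Only the candidate that passes the checks is surfaced, which is the point of the pattern: the first buggy draft never reaches the user.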

2. Tool Use (The Specialist)

No LLM is good at everything. Agentic systems are designed to know their own limits. When precision matters — running a calculation, querying a live database, pulling current market data — the agent reaches for the right tool rather than guessing. This is what separates agentic systems from glorified autocomplete.
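One common way to wire this up is a registry of named tools that the agent can request by name. The sketch below assumes the model's decision arrives as a dictionary with `tool` and `arguments` keys; that shape, the `dispatch` helper, and both example tools are hypothetical.

```python
from __future__ import annotations


def compound_growth(principal: float, rate: float, years: int) -> float:
    """Exact arithmetic the model should not guess at."""
    return round(principal * (1 + rate) ** years, 2)


def lookup_row(table: str, key: str) -> dict:
    """Stand-in for a live database query (in-memory data for illustration)."""
    fake_db = {"customers": {"c-1": {"name": "Acme", "tier": "gold"}}}
    return fake_db[table][key]


# Only tools listed here can be called; anything else is rejected.
TOOLS = {"compound_growth": compound_growth, "lookup_row": lookup_row}


def dispatch(tool_call: dict) -> object:
    """Route a model-requested tool call to the matching function."""
    name = tool_call["tool"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**tool_call["arguments"])


result = dispatch(
    {"tool": "compound_growth", "arguments": {"principal": 1000.0, "rate": 0.05, "years": 2}}
)
print(result)
```

Keeping the registry explicit doubles as an allowlist, so the agent cannot reach for a capability nobody granted it.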

3. Planning (The Architect)

Complex goals don’t have single-step solutions. A planning-enabled agent breaks a high-level objective into a logical sequence of sub-tasks, executes them in order, and adapts when earlier steps produce unexpected results. Think of it as the AI acting as its own project manager.
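The execute-and-adapt part of planning can be sketched as a task queue. In this simplified example the plan is given as a fixed list, where a real agent would ask the model to produce it; the step names, `HANDLERS`, and `REPAIRS` are all hypothetical.

```python
from __future__ import annotations
from collections import deque


def load_data(state: dict) -> bool:
    state["rows"] = [3, None, 5]  # hypothetical input with one missing value
    return True


def validate(state: dict) -> bool:
    return None not in state["rows"]


def clean_data(state: dict) -> bool:
    state["rows"] = [r for r in state["rows"] if r is not None]
    return True


def build_report(state: dict) -> bool:
    state["report"] = f"total={sum(state['rows'])}"
    return True


HANDLERS = {
    "load_data": load_data,
    "validate": validate,
    "clean_data": clean_data,
    "build_report": build_report,
}
REPAIRS = {"validate": "clean_data"}  # which step to insert when a step fails


def run_plan(plan: list[str], state: dict, max_steps: int = 10) -> list[str]:
    """Execute steps in order; on failure, schedule a repair and retry the failed step."""
    queue = deque(plan)
    history: list[str] = []
    while queue:
        if len(history) >= max_steps:
            raise RuntimeError("step budget exhausted; escalate to a human")
        step = queue.popleft()
        ok = HANDLERS[step](state)
        history.append(step if ok else f"{step} (failed)")
        if not ok:
            if step not in REPAIRS:
                raise RuntimeError(f"no repair known for {step}")
            queue.appendleft(step)            # retry after the repair
            queue.appendleft(REPAIRS[step])   # the repair runs first
    return history


state: dict = {}
history = run_plan(["load_data", "validate", "build_report"], state)
print(history, state["report"])
```

The original three-step plan grows to five executed steps because validation failed once, which is the "adapts when earlier steps produce unexpected results" behavior in miniature.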

4. Multi-Agent Collaboration (The Digital Team)

This is where AI agent orchestration tools earn their value. Rather than a single generalist agent trying to do everything, a multi-agent workflow assigns specialized roles: one agent researches, one writes, one fact-checks. Each operates in its lane. The result is typically faster, more accurate, and more scalable than a single-agent approach.
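The researcher, writer, and fact-checker roles can be sketched as three plain functions coordinated by an orchestrator. Everything here is a toy assumption: each "agent" is a hard-coded function standing in for a separately prompted model, and the facts are invented for the example.

```python
from __future__ import annotations


def researcher(topic: str) -> dict[str, str]:
    """Gathers source facts (hard-coded here for illustration)."""
    return {"dataset": "orders.csv", "errors_found": "12"}


def writer(facts: dict[str, str], corrections: dict[str, str]) -> str:
    """Drafts text; uses a vague figure unless the fact-checker supplied a correction."""
    count = corrections.get("errors_found", "about a dozen")
    return f"{facts['dataset']}: {count} errors found and corrected."


def fact_checker(draft: str, facts: dict[str, str]) -> dict[str, str]:
    """Returns the corrections needed; an empty dict means the draft is approved."""
    if f"{facts['errors_found']} errors" in draft:
        return {}
    return {"errors_found": facts["errors_found"]}


def orchestrate(topic: str, max_rounds: int = 3) -> tuple[str, int]:
    """Hand work between the roles until the fact-checker approves the draft."""
    facts = researcher(topic)
    corrections: dict[str, str] = {}
    for round_number in range(1, max_rounds + 1):
        draft = writer(facts, corrections)
        corrections = fact_checker(draft, facts)
        if not corrections:
            return draft, round_number
    raise RuntimeError("draft not approved; escalate to a human reviewer")


draft, rounds = orchestrate("data quality summary")
print(draft, rounds)
```

The orchestrator owns the hand-offs and the stopping rule, which is roughly the job an orchestration layer takes on when the roles are real agents.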

What a Multi-Agent Workflow Actually Looks Like in Practice

Theory aside, here’s what this looks like running in the background of a real enterprise data operation:

Scenario: Automated Data Integrity Workflow

- Trigger — A new dataset lands in your pipeline

- Analysis — An agent scans for missing values, formatting inconsistencies, and outliers

- Correction — A second agent writes a cleaning script, runs it, and validates the fix

- Reporting — You receive a notification: “Dataset processed. 12 errors found and corrected automatically.”

No ticket. No manual review queue. No analyst pulled off higher-value work to babysit an import.

That’s not a future state — it’s what properly configured agentic systems are doing today.
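The data integrity scenario above can be sketched in plain Python. The record layout, the "ten times the median" outlier rule, and the choice to replace bad amounts with the median are all assumptions made for this example, not a recommended cleaning policy.

```python
from __future__ import annotations
from statistics import median


def analyze(rows: list[dict]) -> list[tuple[int, str]]:
    """Analysis agent: flag missing values, formatting inconsistencies, and outliers."""
    typical = median(r["amount"] for r in rows if r["amount"] is not None)
    issues = []
    for index, row in enumerate(rows):
        if row["amount"] is None:
            issues.append((index, "missing"))
        elif row["amount"] > 10 * typical:
            issues.append((index, "outlier"))
        if row["country"] != row["country"].strip().upper():
            issues.append((index, "format"))
    return issues


def correct(rows: list[dict]) -> list[dict]:
    """Correction agent: normalize country codes and replace bad amounts with the median."""
    typical = median(r["amount"] for r in rows if r["amount"] is not None)
    cleaned = []
    for row in rows:
        amount = row["amount"]
        if amount is None or amount > 10 * typical:
            amount = typical
        cleaned.append({"country": row["country"].strip().upper(), "amount": amount})
    return cleaned


def run_workflow(rows: list[dict]) -> tuple[list[dict], str]:
    """Trigger -> analysis -> correction -> validation -> report."""
    issues = analyze(rows)
    cleaned = correct(rows)
    remaining = analyze(cleaned)  # validate the fix by re-running the analysis
    if remaining:
        raise RuntimeError(f"{len(remaining)} issues remain; escalate for manual review")
    return cleaned, f"Dataset processed. {len(issues)} errors found and corrected automatically."


rows = [
    {"country": "US", "amount": 100.0},
    {"country": " us ", "amount": None},
    {"country": "CA", "amount": 120.0},
    {"country": "ca", "amount": 5000.0},
    {"country": "GB", "amount": 110.0},
]
cleaned, report = run_workflow(rows)
print(report)
```

Re-running the analysis on the cleaned data is what lets the workflow report success with some confidence, and the raised error is the escalation path for cases it cannot fix on its own.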

What This Means for Prompt Engineering

There’s a common assumption that agentic AI makes prompt engineering obsolete. It doesn’t. It makes it more consequential.

In a standard setup, prompt engineering is about squeezing a better answer out of a single interaction. In an agentic context, you’re not writing prompts — you’re writing the operating instructions for an autonomous system. The stakes are higher, and the skill set evolves accordingly.

A useful way to think about it:

- Standard prompting: You’re telling the AI to be a writer

- Agentic system design: You’re telling the AI to be an editor-in-chief who knows when to delegate to a writer, when to call a researcher, and how to reject a draft that doesn’t meet the brief

The discipline is shifting from prompt engineering to system engineering — from perfecting a single question to designing the logic that governs an entire operational loop.
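One way to picture "operating instructions" is as an explicit policy object that is checked before every action. The sketch below is an assumption about how such guardrails might be encoded; `AgentPolicy`, its fields, and the tool names are hypothetical.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Behavioral guardrails: what the agent may do and when it must hand off."""
    allowed_tools: frozenset[str]
    max_iterations: int
    escalate_above_rows: int

    def decide(self, tool: str, iteration: int, rows_affected: int) -> str:
        if tool not in self.allowed_tools:
            return "deny"        # tool was never granted
        if iteration > self.max_iterations:
            return "escalate"    # loop has run too long; ask a human
        if rows_affected > self.escalate_above_rows:
            return "escalate"    # change is too large to apply unattended
        return "allow"


policy = AgentPolicy(
    allowed_tools=frozenset({"read_table", "write_table"}),
    max_iterations=5,
    escalate_above_rows=1000,
)

print(policy.decide("write_table", iteration=1, rows_affected=50))
print(policy.decide("drop_table", iteration=1, rows_affected=0))
print(policy.decide("write_table", iteration=2, rows_affected=50000))
```

Writing the rules as data rather than burying them in a prompt makes them reviewable and testable, which fits the shift from prompt engineering to system engineering described above.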

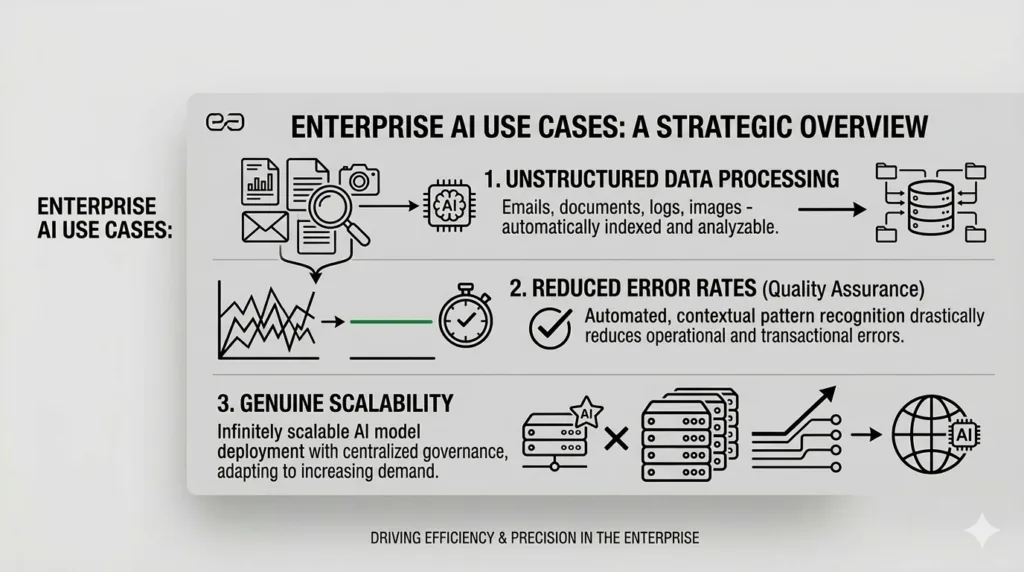

The Enterprise Case: Where Agentic AI Creates Real Value

The business value isn’t abstract. Agentic workflows are particularly well-suited to the parts of enterprise operations that have always resisted automation — the messy, judgment-dependent, multi-step work that standard RPA tools can’t touch.

Unstructured data processing — Agents can read PDFs, parse call recordings, and convert them into structured database entries without human transcription.

Reduced error rates — Because agents reflect and verify their own work, accuracy improves significantly compared to single-pass AI outputs. The self-correction loop catches what a one-shot prompt misses.

Genuine scalability — Instead of adding headcount to handle growing data volumes, you extend the agentic loop. The system scales with your business; your org chart doesn’t have to.

The net effect is a shift in how humans interact with these systems. You move from being the person doing the work to being the person approving work the system has already completed and validated. That’s a meaningful change in leverage.

The Road Ahead: What Agentic AI Looks Like in the Near Term

A few shifts are already underway that enterprise leaders should be tracking:

From Copilots to Operators — The tools that suggest your next sentence are giving way to systems that execute multi-step tasks across your software stack without being asked twice.

Agentic Departments — Early adopters are building functions where agents handle the majority of repetitive workflow volume, with humans focused on exceptions, edge cases, and final approvals.

System Design as a Core Skill — The ability to architect agentic workflows — defining how an agent thinks, what tools it can access, and where it must escalate — is becoming a foundational capability for every team that touches data or operations.

Create AI Workflows with Beam Data

The shift from prompts to processes isn’t a technical upgrade — it’s a different theory of what AI is for. Standard AI makes individuals more productive. Agentic AI makes systems more autonomous.

For enterprise organizations, that’s the distinction that determines whether AI becomes a line item or a competitive advantage.

Ready to eliminate AI sprawl and put your workflows on autopilot? Explore our AI Orchestration Platform and see how Beam AI Hub unifies your enterprise data and agent infrastructure in one secure layer.

FAQ

1. What is the difference between prompt engineering and an agentic workflow?

Prompt engineering is the act of writing instructions. An agentic workflow is the system that follows those instructions iteratively — planning, acting, and self-correcting — until a goal is fully achieved.

2. What is a multi-agent workflow?

A multi-agent workflow is a design pattern where multiple specialized AI agents collaborate on a task, each handling a distinct role — research, writing, verification — rather than a single generalist agent attempting everything at once.

3. What are AI agent orchestration tools?

These are platforms that manage how multiple agents communicate, hand off tasks, and coordinate toward a shared goal. They provide the infrastructure layer that makes multi-agent workflow deployments reliable at enterprise scale.

4. How does Beam Data support agentic workflows?

Agentic systems are only as reliable as the data they operate on. Beam Data AI Hub provides the infrastructure to ensure your enterprise data is clean, structured, and agent-ready — so your agentic loops produce accurate outputs, not confident hallucinations.

5. What is the primary business benefit of agentic AI?

Autonomy. The ability to run complex, multi-step processes without constant human prompting — saving significant manual effort and freeing teams to focus on decisions that actually require human judgment.