By now, it is hardly surprising to hear that AI is becoming part of every organization. According to McKinsey’s State of AI report, a staggering 78% of organizations worldwide reported adopting AI technologies in 2025, with enterprise-scale deployments surging by 25% year-over-year. PwC echoes this, projecting that AI could add $15.7 trillion to the global economy by 2030. Yet this rapid embrace comes with a hidden caveat: poor AI governance.

Poor governance is no surprise given how quickly organizations jump on the AI bandwagon without properly researching their options or adapting AI to their own needs. In the rush to adopt AI models, leaks quietly begin: shadow AI deployments, compliance blind spots, and hidden risks that can undo the benefits of adoption. It is a ticking time bomb that can go off at any time.

In this article, we examine AI governance through multiple lenses: what it means, why it fails, common failure patterns, real-world case studies, and practical frameworks. We also highlight the ethical and legal liabilities organizations face when they treat governance as an afterthought rather than a strategic capability.

What is AI governance?

Enterprise AI governance is the framework of rules, processes, and guidelines that ensures artificial intelligence systems are developed, used, and managed responsibly. It focuses on core principles such as safety, security, fairness, and regulatory compliance to mitigate and contain the risks associated with using AI.

Why does governance fail?

Three main reasons explain why AI governance typically fails within organizations:

- Operational Silos: Models often develop multiple issues, especially when teams train them on outdated datasets that produce outdated recommendations. The user and the vendor may also diverge, pursuing two different visions for the same system. Over time, these complexities in handling AI models cause leaks.

- Organizational Fragmentation: Not everyone in the organization has the skills, or takes an active role, to manage and supervise AI model development. Siloed teams give rise to AI sprawl, increasing the risks of using several different tools to complete a single process.

- Innovation Fear Trap: Some companies shy away from compliance altogether because they see rules and regulations as restrictive. Worried that governance will slow AI development, they put off adopting the rules they need.

If your team is quick to dismiss AI governance as just another formality, be prepared to face the consequences. These include reputational damage, erosion of core competencies, and possible litigation (if a model’s decisions show bias against an individual). In a world where AI adopters outpace laggards by 2.5x in market share, poor governance isn’t merely negligence. It’s competitive suicide.

5 Most Common AI Governance Failures

Across industries, the same patterns recur in modern AI governance failures:

- Black Box Transparency

Enterprises deploy models whose outputs cannot be traced back or measured against a clear standard, which creates uncertainty. Without explainability, the company is legally defenseless when a decision is challenged. Aligning with AI operational governance practices helps prevent this.

- Shadow AI & Data Leakage

Employees frequently use external AI tools without the knowledge of IT or compliance teams, creating undocumented workflows through which sensitive data can leak. Shadow AI is a major contributor to AI governance implementation challenges and one of the biggest challenges of governing AI at scale.

- Ownership Gap

Many firms lack a single person accountable for the AI processes they run. When a model malfunctions, responsibility is fragmented across teams. Unclear accountability is a leading factor in today’s discussions of AI governance challenges and solutions.

- Data Lineage Failures

Without knowing where their data comes from and how it is being used, companies cannot prove compliance with regulations such as the EU AI Act.

- Missing AI Risk Appetite

Many organizations adopt AI without defining how much risk they are willing to absorb. With no defined risk appetite, AI systems can easily behave in ways that do not sit well with stakeholders.
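To make the data lineage failure more concrete, here is a minimal Python sketch of what a lineage record might capture. The `LineageRecord` fields and the `has_gap`, `audit_gaps`, and `models_at_risk` helpers are hypothetical illustrations, not requirements of the EU AI Act or features of any specific tool. The idea is simply that a dataset with no documented source or consent basis should flag every model that depends on it.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class LineageRecord:
    """Hypothetical lineage entry: where a dataset came from and which models use it."""
    dataset_id: str
    source: str                    # upstream system the data was collected from
    consent_basis: Optional[str]   # e.g. "explicit_opt_in"; None means undocumented
    collected_on: date
    used_by_models: Tuple[str, ...] = ()


def has_gap(record: LineageRecord) -> bool:
    """A record cannot support a compliance claim if its source or consent is unknown."""
    return not record.source or record.consent_basis is None


def audit_gaps(records: List[LineageRecord]) -> List[str]:
    """Return the IDs of datasets with incomplete lineage."""
    return [r.dataset_id for r in records if has_gap(r)]


def models_at_risk(records: List[LineageRecord]) -> Dict[str, List[str]]:
    """Map each model to the datasets with lineage gaps that it depends on."""
    at_risk: Dict[str, List[str]] = {}
    for r in records:
        if has_gap(r):
            for model in r.used_by_models:
                at_risk.setdefault(model, []).append(r.dataset_id)
    return at_risk


# Illustrative data only: one documented dataset, one with no recorded consent.
records = [
    LineageRecord("subscribers_2024", "crm_export", "explicit_opt_in",
                  date(2024, 3, 1), ("recommender_v2",)),
    LineageRecord("viewing_history", "web_tracking", None,
                  date(2024, 5, 12), ("recommender_v2", "churn_model")),
]

print(audit_gaps(records))      # ['viewing_history']
print(models_at_risk(records))  # {'recommender_v2': ['viewing_history'], 'churn_model': ['viewing_history']}
```

A production lineage system would track far more (transformations, retention, access history), but even this much lets an auditor answer a basic question: which models were trained on data with no documented consent?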

Real Life Case Studies of AI Failures

- Paramount Subscriber Data Scandal

Paramount faced a $5 million class-action lawsuit after its AI-driven personalization engines were found to have mishandled subscriber data. Weak data lineage caused the failure because the company could not verify that it had collected training data with proper consent, which led to a massive privacy violation.

- Apple Card Gender Bias Controversy

Though it began in late 2019, the Apple Card controversy (the card is issued by Goldman Sachs) remains the textbook example of a “black box” failure. Despite claims that the algorithm was “gender-blind,” some customers reported receiving credit limits up to 20 times higher than their wives’, even though the couples shared assets. The failure wasn’t just the bias. It was the bank’s inability to explain why the AI reached those conclusions, illustrating how “blind” models can pick up on “proxy variables” (like shopping habits) that mirror historical discrimination.

How to Build Smarter AI Governance Frameworks

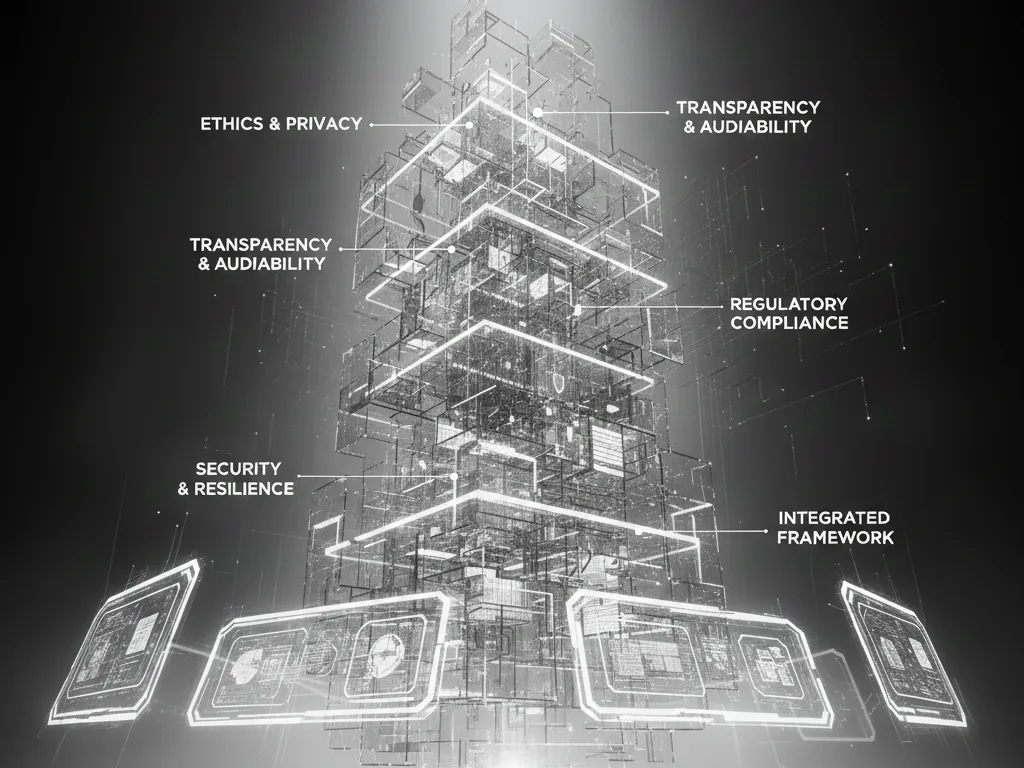

Organizations that successfully address AI governance challenges are shifting toward structured frameworks built on five governance pillars:

1. AI Organization

Create cross-functional governance teams that combine technical leadership, compliance, and executive oversight to address the AI adoption challenges large enterprises often face.

2. Legal and Compliance Integration

Continuous legal participation reduces exposure to evolving AI regulations and strengthens enterprise readiness.

3. Ethics and Transparency

Establish fairness testing, explainability thresholds, and accountability standards tied to risk levels.

4. Data Infrastructure

Centralized data infrastructure enables the lineage tracking and consistent controls needed to govern AI at scale.

5. AI Protection

Monitoring, validation, and incident response systems transform governance from reactive oversight into operational resilience.
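The ethics and transparency pillar calls for fairness testing tied to risk levels. One common way to sketch this is a disparate impact ratio: the lowest group approval rate divided by the highest. The Python below is a minimal illustration under stated assumptions. The risk tiers and thresholds in `MIN_RATIO_BY_TIER` are hypothetical values an organization would set from its own risk appetite, with 0.8 echoing the “four-fifths rule” used in US employment guidance.

```python
from typing import Dict, List, Tuple

# Hypothetical thresholds: stricter parity is required as a model's risk tier rises.
MIN_RATIO_BY_TIER = {"high": 0.9, "medium": 0.8, "low": 0.7}


def selection_rate(outcomes: List[int]) -> float:
    """Share of positive decisions (1 = approved, 0 = declined)."""
    if not outcomes:
        raise ValueError("each group needs at least one decision")
    return sum(outcomes) / len(outcomes)


def disparate_impact_ratio(outcomes_by_group: Dict[str, List[int]]) -> float:
    """Lowest group selection rate divided by the highest; 1.0 means parity."""
    rates = [selection_rate(o) for o in outcomes_by_group.values()]
    if max(rates) == 0:
        return 1.0  # nobody was approved in any group, so the rates are equal
    return min(rates) / max(rates)


def fairness_gate(outcomes_by_group: Dict[str, List[int]], risk_tier: str) -> Tuple[bool, float]:
    """Pass or fail a model against the threshold for its risk tier."""
    ratio = disparate_impact_ratio(outcomes_by_group)
    return ratio >= MIN_RATIO_BY_TIER[risk_tier], round(ratio, 3)


# Illustrative decisions: group_a approved 7 of 10, group_b approved 5 of 10.
decisions = {
    "group_a": [1, 1, 0, 1, 1, 0, 1, 1, 1, 0],
    "group_b": [1, 0, 0, 1, 0, 1, 0, 1, 0, 1],
}

print(fairness_gate(decisions, "medium"))  # (False, 0.714)
print(fairness_gate(decisions, "low"))     # (True, 0.714)
```

The same model passes under a low-risk tier and fails under a medium-risk one, which is the point of tying thresholds to risk levels. A single ratio is not a full fairness audit; it is one signal to combine with explainability checks and human review.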

These pillars directly support AI governance improvement by embedding governance into everyday workflows rather than treating it as an external review function. Industry reporting on AI governance suggests that companies that treat governance as a core function get more value from their AI investments.

Build Stronger AI Governance with Beam Data

Governing AI at scale is nearly impossible with manual processes. Beam Data’s AI Hub gives organizations a single place to manage all their data and AI tools, addressing the core needs of AI governance improvement.

Ready to turn your AI governance from a risk into a competitive advantage? Explore Beam Data’s AI Hub here and contact us today to supercharge your AI journey.

Frequently Asked Questions (FAQs)

1. How does AI affect data privacy and governance?

AI can compromise privacy by exposing datasets that contain sensitive information. Enterprise AI governance ensures that data flows are mapped and protected by privacy controls.

2. Should AI governance become a board mandate?

Yes, because AI decisions now create financial, legal, and reputational risk. Board oversight aligns governance with enterprise strategy and accountability.

3. How can we ensure AI is used ethically?

Organizations must implement bias testing, transparency standards, and human oversight, and embed ethics directly into workflows instead of documenting them only as policy.

4. How can organizations control AI sprawl while staying compliant?

Centralized governance platforms such as Beam Data’s AI Hub help enterprise teams consolidate AI usage, data access, and compliance monitoring. This lets teams innovate while maintaining enterprise-grade governance controls.