Key Takeaways

- Standalone LLMs are not failing for lack of capability; they are failing for lack of coordination. Without an AI orchestration platform, AI investments fragment.

- AI orchestration has moved beyond simple triggers and webhooks. In 2026, it means governing multi-agent workflows, model selection, and data access from a single control plane.

- AI Hub by Beam Data is built as an AI agent orchestration platform: a governed, industry-aware platform for enterprises that need more than a developer framework can offer.

- The ‘build your own’ approach consistently underestimates the cost of security, observability, and maintenance at production scale.

- Model agnosticism is the strategic requirement: the platform must outlast whichever LLM is currently winning benchmarks.

I. The Rise of the Orchestration Layer

Most enterprise AI initiatives in 2025 followed the same pattern: a team identifies a promising use case, spins up an LLM-powered tool, sees early results, and then quietly stalls. The model performs well in isolation; what is missing is an AI orchestration and automation platform to coordinate workflows, data, and governance. The problem is everything around the model: the data it cannot reliably access, the workflows it cannot coordinate, the governance requirements it cannot satisfy, and the audit trail that does not exist.

This is the fragmented AI problem. It is not a model problem. It is an infrastructure problem: specifically, the absence of an enterprise AI orchestration platform.

Gartner projects that 40% of enterprise applications will embed task-specific AI agents by the end of 2026. The constraint on that number is not model availability; it is the absence of a coordination layer that can govern those agents reliably.

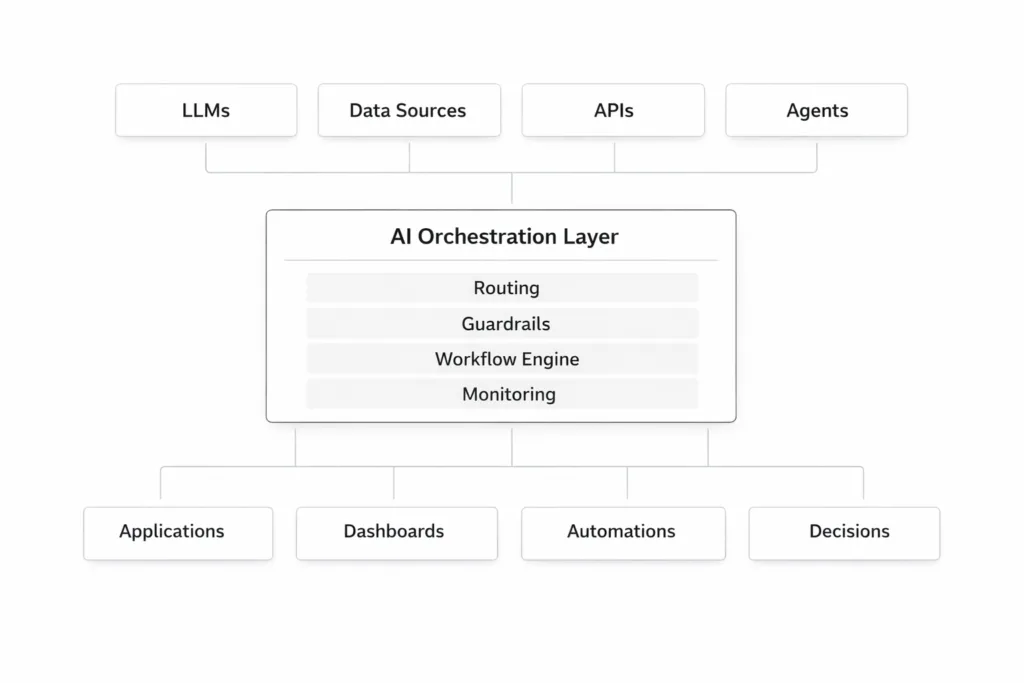

AI orchestration is the answer to that constraint. In its most basic form, orchestration routes tasks between AI components. In its mature enterprise form, it is the layer that manages multi-agent workflows, enforces governance policies, handles data access across ERP and CRM systems, and provides the observability that makes AI deployable in regulated environments. The gap between those two definitions is where most enterprises are currently stuck.

II. AI Hub by Beam Data: The Unified Control Plane

Most AI platforms force a choice: developer flexibility or enterprise governance. AI Hub by Beam Data is built on the premise that enterprises need both, and that neither should require compromising the other. It is designed to function as a full AI orchestration platform that scales with teams across departments.

One governed platform, not a stack of point solutions

AI Hub unifies model access, data pipelines, agent workflows, and governance controls into a single platform. Where most enterprise AI stacks require separate tools for orchestration, observability, data connectivity, and security, AI Hub provides them together. This eliminates fragmentation and supplies the scalability features required for enterprise-wide deployment.

This matters practically. Organizations with fragmented AI stacks spend significant engineering effort on the connective tissue between tools rather than on the workflows those tools are meant to power. Consolidation removes that tax.

Solving the experimentation gap

The most common failure mode in enterprise AI is not a failed pilot; it is a successful pilot that never reaches production. Teams build promising proofs of concept on informal infrastructure that cannot satisfy security review, cannot scale reliably, and cannot be audited. AI Hub addresses this directly by providing a standardized deployment path from day one, rather than requiring a rebuild when the experiment is ready to operationalize. This is critical for organizations evaluating whether an AI orchestration platform is suitable for non-technical users, where simplicity and governance must coexist.

Enterprise-grade deployment, including on-premise

AI Hub supports full on-premise and private VPC deployment, a requirement that is non-negotiable in financial services, healthcare, and public sector environments. Data processed through AI Hub workflows does not leave the security boundary. This is not a configuration option retrofitted onto a cloud-first architecture — it is a design principle.

Verticalized intelligence for faster time to value

Rather than requiring every enterprise to build industry context from scratch, AI Hub ships with verticalized intelligence for Manufacturing, Finance, and EdTech. Pre-built agents and workflow templates encode the domain logic that would otherwise require months of prompt engineering and fine-tuning to develop. For enterprises in those sectors, this meaningfully reduces time-to-value on the first deployment.

III. Why Your Enterprise Needs a Dedicated Platform

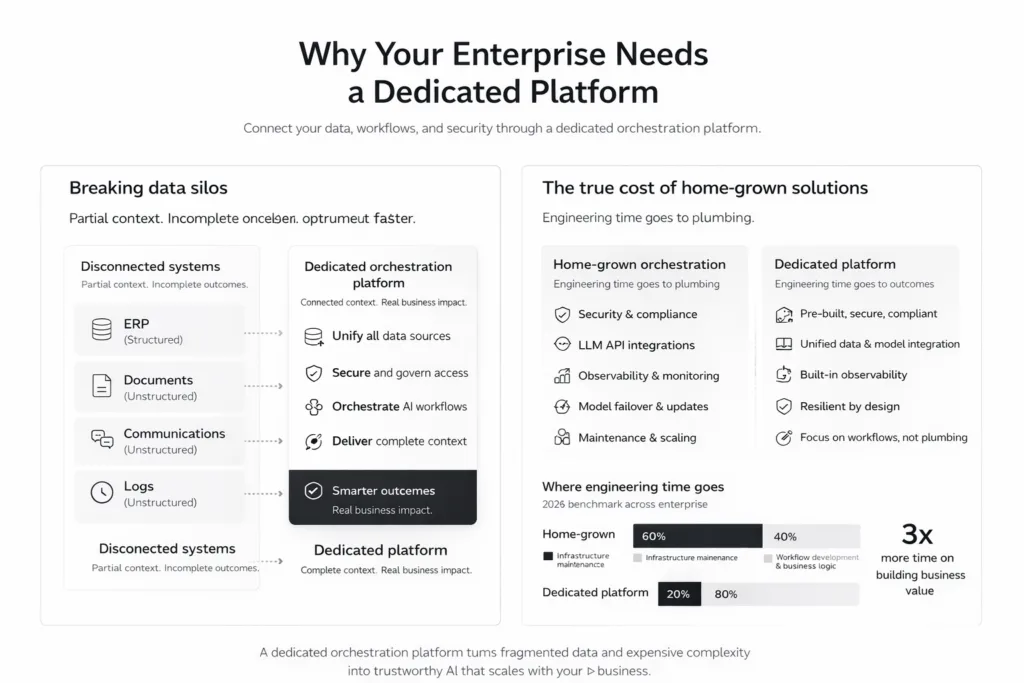

Breaking data silos

Enterprise AI workflows almost always require data from multiple systems — structured data from ERP and CRM platforms, unstructured data from documents, communications, and logs. Most LLM frameworks handle one or the other well. A dedicated orchestration platform is architected to bridge both, connecting the reasoning capabilities of AI models with the structured record systems that run the business.

Without this bridge, agents operate on partial context. A procurement agent that can query invoices but not cross-reference supplier risk data from the ERP will produce recommendations that are technically coherent but operationally incomplete. The orchestration layer is what makes the context complete.

The real cost of home-grown solutions

Custom-built orchestration layers are common in early enterprise AI programs and expensive in mature ones. The engineering effort required to maintain security compliance, keep pace with evolving LLM APIs, implement observability, and handle model failover is consistently underestimated at the start and consistently felt at scale.

A 2026 benchmark across enterprise AI teams found that organizations relying on custom orchestration layers spent up to 60% of AI engineering time on infrastructure maintenance rather than on building or improving workflows. A dedicated platform shifts that ratio.

IV. Core Capabilities: What to Look For

Deterministic vs. probabilistic control

Reliable enterprise workflows require both. Deterministic controls — rigid business rules, approval gates, compliance checks — ensure that AI systems behave predictably on the dimensions that cannot tolerate variation. Probabilistic AI reasoning handles the judgment-dependent steps that rules-based automation cannot reach. An effective orchestration platform must support both modes in the same workflow, with clear boundaries between them.
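
As a rough illustration of that boundary, the sketch below runs a hard business rule before a judgment step. Everything in it is hypothetical: the `Invoice` fields, the limits, and `probabilistic_review`, which stands in for a model call and is stubbed with arithmetic only so the example runs.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    amount: float
    supplier_approved: bool


def deterministic_gate(invoice: Invoice, limit: float = 10_000.0) -> bool:
    """Hard business rule: the same input always gives the same answer."""
    return invoice.supplier_approved and invoice.amount <= limit


def probabilistic_review(invoice: Invoice) -> float:
    """Stand-in for a model call that returns a risk score in [0, 1].

    A real deployment would call an LLM here; this stub is arithmetic
    only so the example is runnable.
    """
    return min(invoice.amount / 20_000.0, 1.0)


def process(invoice: Invoice, risk_threshold: float = 0.4) -> str:
    # The rule-based gate runs first; the model cannot override it.
    if not deterministic_gate(invoice):
        return "rejected_by_rule"
    # The judgment step runs only inside the boundary the rule allows.
    if probabilistic_review(invoice) > risk_threshold:
        return "needs_review"
    return "approved"
```

The design point is ordering: the model's output can only narrow what the rule already permits, never widen it.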

Multi-agent coordination

The most capable enterprise deployments in 2026 use coordinated teams of specialized agents rather than single generalist models. A supply chain workflow might involve a data retrieval agent, a risk assessment agent, a reporting agent, and an escalation agent — each fine-tuned for its function, coordinated by an orchestrator. Frameworks like LangGraph and AutoGen provide the underlying coordination mechanisms; an enterprise platform layers governance, observability, and data access on top.
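
A minimal sketch of that supply chain example follows. It does not use LangGraph or AutoGen; the agents are plain functions with invented names and hard-coded data, and the orchestrator simply runs them in order while recording a trace.

```python
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]
Agent = Callable[[Context], Context]


def retrieval_agent(ctx: Context) -> Context:
    # Hypothetical: a real agent would query ERP or CRM systems here.
    return {**ctx, "shipments_delayed": 3}


def risk_agent(ctx: Context) -> Context:
    return {**ctx, "risk": "high" if ctx["shipments_delayed"] >= 3 else "low"}


def reporting_agent(ctx: Context) -> Context:
    report = f"{ctx['shipments_delayed']} delayed, risk {ctx['risk']}"
    return {**ctx, "report": report}


def escalation_agent(ctx: Context) -> Context:
    return {**ctx, "escalated": ctx["risk"] == "high"}


def orchestrate(agents: List[Agent], ctx: Context) -> Context:
    """Run agents in order and record which ones ran, for later audit."""
    trace: List[str] = []
    for agent in agents:
        ctx = agent(ctx)
        trace.append(agent.__name__)
    return {**ctx, "trace": trace}


result = orchestrate(
    [retrieval_agent, risk_agent, reporting_agent, escalation_agent],
    {"supplier": "ACME"},
)
```

Each agent sees only the shared context, so the orchestrator is the single place where ordering and tracing are decided.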

Human-in-the-loop (HITL) controls

Full automation is the goal for high-volume, low-risk processes. For high-stakes decisions — credit approvals, compliance exceptions, significant procurement actions — human approval gates are not optional. An enterprise orchestration platform must make HITL workflows first-class citizens: easy to configure, visible in audit logs, and able to pause and resume agent pipelines without data loss.
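
One way to picture pause-and-resume is a pipeline whose state is plain serializable data. The sketch below is an assumption-laden toy: `run_pipeline`, the stage names, and the stubbed recommendation are all invented for illustration, and JSON stands in for whatever durable store a real platform would use.

```python
import json
from typing import Optional


def run_pipeline(state: dict, approval: Optional[bool] = None) -> dict:
    """Advance the pipeline; stop at the approval gate until a decision arrives."""
    state = dict(state)  # work on a copy, never the caller's dict
    if state.get("stage", "start") == "start":
        state["recommendation"] = "approve_credit_line"  # stub for an agent step
        state["stage"] = "awaiting_approval"
    if state["stage"] == "awaiting_approval":
        if approval is None:
            return state  # paused: the caller persists the state and waits
        # Record the human decision so it is visible in the audit trail.
        state["audit"] = state.get("audit", []) + [{"human_decision": approval}]
        state["stage"] = "done" if approval else "rejected"
    return state


paused = run_pipeline({"case_id": "C-1"})
saved = json.dumps(paused)  # persisted while waiting for a reviewer
resumed = run_pipeline(json.loads(saved), approval=True)
```

Because the paused state round-trips through serialization, the agent's earlier output survives the wait and the human decision lands in the same record.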

V. 2026 Market Landscape: A Quick Comparison

The orchestration market breaks into four categories. Understanding where each fits helps clarify why platform selection cannot be made on model performance alone.

| Category | Platform | Core Strength | Deployment |
| --- | --- | --- | --- |
| Governed Foundation | AI Hub (Beam Data) | Unified governance, multi-agent orchestration & industry-specific agents | VPC / On-Prem / Cloud |
| Enterprise Native | UiPath Maestro | Strong for legacy RPA integration and task-heavy automation | Hybrid |
| Developer-First | LangGraph / AutoGen | Maximum flexibility for engineering teams building custom agent pipelines | Self-Hosted |
| Cloud-Native | Google Vertex AI | Deep GCP ecosystem integration and A2A protocol support | Public Cloud |

The distinction between these categories is not about capability ceiling — it is about what the organization owns and maintains. Developer frameworks offer maximum flexibility but require the enterprise to build and sustain the governance, observability, and security layers. Enterprise platforms make different trade-offs in favor of operational reliability over architectural freedom.

VI. Key Evaluation Criteria

Security and data sovereignty

PII redaction, prompt shielding, and data residency controls are table stakes in 2026 — not differentiators. The evaluation question is not whether a platform has these controls, but whether they are enforced at the infrastructure level or depend on correct configuration by each implementation team. Infrastructure-level enforcement is more reliable and easier to audit.
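
To make the difference concrete, the sketch below wraps a model call so that redaction happens in the wrapper rather than in each team's code. The two regular expressions are deliberately simplistic and would miss many real PII formats; `governed` and `echo_model` are hypothetical names, not part of any product API.

```python
import re
from typing import Callable

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace email addresses and US-style SSNs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)


def governed(model_call: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap any model call so the caller cannot skip redaction."""
    def wrapper(prompt: str) -> str:
        return model_call(redact(prompt))
    return wrapper


@governed
def echo_model(prompt: str) -> str:
    # Stand-in for a real model client.
    return f"model saw: {prompt}"
```

Calling `echo_model("Contact jane@example.com, SSN 123-45-6789")` passes only the placeholder text to the underlying function, regardless of what the calling team remembered to configure.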

Integration depth and MCP support

The Model Context Protocol (MCP) is emerging as the standard for how AI agents access enterprise data and tools. Platforms with native MCP support allow agents to discover and use data sources dynamically rather than requiring hardcoded integrations. This matters operationally: as enterprise data environments change, MCP-compatible agents adapt without requiring workflow rebuilds.
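
The contrast between discovery and hardcoded integration can be shown with a toy registry. To be clear about assumptions: this is not an MCP client, server, or wire format. `ToolRegistry` and the tool names are invented, and the example only mirrors the general list-then-call pattern.

```python
from typing import Any, Callable, Dict, List


class ToolRegistry:
    """Toy in-memory registry; not an MCP client or server."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def list_tools(self) -> List[str]:
        return sorted(self._tools)

    def call(self, name: str, **kwargs: Any) -> Any:
        return self._tools[name](**kwargs)


def agent_step(registry: ToolRegistry, wanted: str, **kwargs: Any) -> Any:
    # The agent checks what is available at run time instead of importing it.
    if wanted not in registry.list_tools():
        return f"tool '{wanted}' unavailable"
    return registry.call(wanted, **kwargs)


registry = ToolRegistry()
registry.register("invoice_lookup", lambda invoice_id: {"id": invoice_id, "status": "paid"})
before = agent_step(registry, "supplier_risk", supplier="ACME")

# A new data source appears; the agent code above does not change.
registry.register("supplier_risk", lambda supplier: {"supplier": supplier, "risk": "low"})
after = agent_step(registry, "supplier_risk", supplier="ACME")
```

The agent function is identical before and after the new tool is registered, which is the operational property the paragraph above describes.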

Observability and cost tracking

Audit trails, token usage tracking, and cost attribution by workflow are requirements in any enterprise AI deployment that will face scrutiny from finance, compliance, or IT governance teams. An orchestration platform that cannot answer ‘which agent accessed which data, when, and at what cost’ will not survive the governance review that precedes production scale.
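
A minimal sketch of what answering that question requires: one structured record per agent action, from which both access history and cost can be derived. The field names, sample events, and the per-token price are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class AuditEvent:
    timestamp: str
    workflow: str
    agent: str
    data_source: str
    tokens: int


PRICE_PER_1K_TOKENS = 0.002  # hypothetical rate, not a real vendor price


def cost_by_workflow(events: List[AuditEvent]) -> Dict[str, float]:
    """Sum tokens per workflow, then convert to cost."""
    tokens: Dict[str, int] = defaultdict(int)
    for event in events:
        tokens[event.workflow] += event.tokens
    return {wf: n / 1000 * PRICE_PER_1K_TOKENS for wf, n in tokens.items()}


def who_accessed(events: List[AuditEvent], data_source: str) -> List[Tuple[str, str]]:
    """Return (agent, timestamp) pairs for every access to a data source."""
    return [(e.agent, e.timestamp) for e in events if e.data_source == data_source]


events = [
    AuditEvent("2026-01-05T09:00:00Z", "claims", "retrieval_agent", "crm", 1500),
    AuditEvent("2026-01-05T09:00:04Z", "claims", "risk_agent", "erp", 500),
    AuditEvent("2026-01-05T09:01:00Z", "supply_chain", "reporting_agent", "erp", 1000),
]
costs = cost_by_workflow(events)
erp_access = who_accessed(events, "erp")
```

Both questions are answered from the same event records, so cost attribution and access auditing cannot drift apart.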

VII. From Pilot to Production: A 90-Day Strategy

Step 1: Identify orchestration-ready use cases

Not all workflows benefit equally from multi-agent orchestration. The highest-value starting points share common characteristics: they involve multiple steps, require data from more than one system, currently depend on human coordination between those steps, and have clear success metrics. Automated claims processing and supply chain insights are canonical examples — high volume, well-defined inputs and outputs, measurable error rates.

Step 2: The build vs. buy decision

The relevant question is not whether the engineering team could build a custom orchestration layer — most can. The question is what that decision costs over three years, not three months. Custom layers require ongoing maintenance as LLM APIs evolve, security standards update, and workflow requirements change. For organizations without a dedicated AI platform engineering team, a managed platform consistently delivers better total cost of ownership.

Step 3: The 90-day agentic strategy

A reliable implementation pattern across successful enterprise deployments follows four phases:

- Trigger — Define the workflow entry point and the conditions that initiate agent execution.

- Execute — Configure the agent pipeline, data connections, and tool access for the target workflow.

- Verify — Implement automated validation at key handoff points and set human review thresholds for edge cases.

- Log — Establish audit trails, cost tracking, and outcome metrics before moving to production scale.

Organizations that follow this sequence consistently report faster production timelines and fewer post-deployment governance issues than those that defer verification and logging to a later phase.
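
The four phases (Trigger, Execute, Verify, Log) can be sketched as a single handler. This is an illustrative toy for a claims workflow: the event shape, the 80% payout rule, and the review threshold are assumptions made up for the example.

```python
from typing import Any, Dict, List, Optional

audit_log: List[Dict[str, Any]] = []


def trigger(event: Dict[str, Any]) -> bool:
    """Trigger: decide whether this event starts the workflow."""
    return event.get("type") == "claim_submitted"


def execute(event: Dict[str, Any]) -> Dict[str, Any]:
    """Execute: stub for the agent pipeline that computes a payout."""
    return {"claim_id": event["claim_id"], "payout": round(event["amount"] * 0.8, 2)}


def verify(result: Dict[str, Any], review_threshold: float = 5000.0) -> str:
    """Verify: route large payouts to a human reviewer."""
    return "human_review" if result["payout"] > review_threshold else "auto_approved"


def log(event: Dict[str, Any], result: Dict[str, Any], status: str) -> None:
    """Log: append an audit record for every processed event."""
    audit_log.append({"event": event, "result": result, "status": status})


def handle(event: Dict[str, Any]) -> Optional[str]:
    if not trigger(event):
        return None  # not our workflow; nothing executed, nothing logged
    result = execute(event)
    status = verify(result)
    log(event, result, status)
    return status


status = handle({"type": "claim_submitted", "claim_id": "A1", "amount": 1000})
```

Verification and logging sit inside the same handler as execution, so they cannot be deferred to a later phase without changing the code path.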

VIII. Future-Proofing Your AI Stack with Beam Data’s AI Hub

The LLM that is winning benchmarks today will not be the one winning benchmarks in eighteen months. The orchestration platform that ties your enterprise workflows to a specific model — or a specific cloud provider — is creating technical debt at the point where it will be most expensive to resolve.

Model agnosticism is the architectural requirement that protects the investment. An orchestration platform should abstract away the model layer, allowing enterprises to swap models as the market evolves without rebuilding the workflows that sit on top.
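
In code, that abstraction usually amounts to workflows depending on an interface rather than a vendor client. The sketch below uses Python's `typing.Protocol`; `ChatModel`, `VendorAModel`, and `VendorBModel` are hypothetical stand-ins, not real SDK classes.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface the workflow is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAModel:
    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"  # stand-in for a real API call


class VendorBModel:
    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"  # stand-in for a different provider


def summarize_ticket(model: ChatModel, ticket: str) -> str:
    """Workflow code: unchanged no matter which model is passed in."""
    return model.complete(f"Summarize: {ticket}")
```

Swapping `VendorAModel()` for `VendorBModel()` changes one argument at the call site and nothing inside `summarize_ticket`.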

AI Hub by Beam Data is model-agnostic by design. The workflows, governance controls, and data connections your team builds today are not dependent on the current leading LLM. That is the definition of infrastructure that compounds rather than depreciates.

The broader shift is from AI as a tool to AI as a workforce. Individual LLMs are useful instruments. Coordinated, governed, observable networks of agents — operating on clean data, within defined guardrails, with full audit trails — are operational infrastructure. The enterprises investing in the orchestration layer now are not just solving an immediate productivity problem. They are building the foundation for how work gets done.

Frequently Asked Questions

1. What is an AI orchestration platform?

An AI orchestration platform coordinates AI models, data pipelines, and agent workflows. It manages routing, governance, and observability to enable reliable, production-scale automation.

2. How is AI Hub by Beam Data different from LangGraph or AutoGen?

LangGraph and AutoGen are developer frameworks that require teams to build governance, security, and integrations themselves. Beam Data AI Hub is a full enterprise platform that provides these capabilities natively, along with on-premise deployment, industry-specific agents, and a standardized path from pilot to production.

3. What is the Model Context Protocol (MCP) and why does it matter?

MCP is a standard that enables AI agents to dynamically access enterprise data and tools. It allows systems to adapt to changing data environments without rebuilding integrations.

4. When should a company build vs. use a platform?

Custom builds suit organizations with dedicated platform engineering teams and highly unique requirements. For most enterprises, platforms are more cost-effective as infrastructure maintenance quickly outweighs initial flexibility.

5. Does AI Hub by Beam Data support on-premise deployment?

Yes. Beam Data AI Hub supports full on-premise and private VPC deployment, ensuring sensitive data remains within enterprise boundaries. This is a core architectural design, not an add-on.

6. What are orchestration-ready use cases?

These are multi-step workflows requiring data from multiple systems and clear success metrics. Beam Data AI Hub commonly supports use cases like claims processing, supply chain management, and compliance workflows.