Table of Contents

- The Short Answer

- Why This Is a CTO Problem, Not a Data Team Problem

- The Regulatory Stack: Four Frameworks in Scope

- Where Agentic AI Delivers Real Value in Financial Services

- The Four Governance Questions Every Financial Services CTO Must Answer

- The Vendor Concentration Problem Nobody Is Talking About

- Vendor Governance Checklist: Eight Questions Before Signing an Agentic AI Contract

- FAQ

TL;DR

- Agentic AI in financial services data pipelines triggers obligations under four distinct regulatory frameworks simultaneously: SOX, BCBS 239, SR 11-7, and DORA. No single framework covers the full exposure.

- The accountability for autonomous agent decisions sits at the CTO level, not the data team level — agent behavior in regulated pipelines is a control, and controls are owned by leadership.

- SR 11-7 was written for static, deterministic models. Agentic AI is dynamic. The framework strains at vendor-side model updates, validation scope, and explainability — and banks must resolve these gaps before deployment.

- Vendor diversity at the contract layer can disguise infrastructure concentration risk. Most production agentic AI platforms ultimately run on two or three hyperscaler AI infrastructures. DORA concerns itself with exactly this scenario.

- The governance questions in this piece are designed to be taken into a board risk committee or vendor evaluation process. They are not aspirational: they are the minimum required before autonomous agents operate in regulated pipelines.

Autonomous AI agents are entering financial services data pipelines. They are routing transactions, flagging anomalies, aggregating risk data, and drafting SAR narratives — and the governance challenge for agentic AI data pipeline deployments in financial services is not whether the technology works, but who is accountable when it does. The data teams deploying these systems are focused on capability. The question this piece addresses is different: what are you, as the CTO, responsible for when an autonomous agent makes a consequential decision in a regulated pipeline?

The Short Answer

Agentic AI in financial services data operations refers to autonomous systems that plan multi-step workflows, make decisions based on runtime context, and take actions — including data transformation, routing, and downstream triggers — without step-by-step human instruction. Unlike traditional ETL or RPA, agentic systems adapt to conditions at runtime: detecting schema changes, routing anomalies to remediation workflows, and escalating to human review based on configurable thresholds. For financial services CTOs, this distinction has direct regulatory implications: autonomous agent decisions are subject to SOX audit trail requirements, BCBS 239 data quality principles, SR 11-7 model risk management obligations, and DORA third-party resilience requirements.

Why This Is a CTO Problem, Not a Data Team Problem

Traditional pipeline automation has a clean governance story. ETL tools execute static, documentable transformation logic. A developer writes the code, an architect reviews it, an auditor samples the output, and the process is repeatable. The control is the code.

Agentic AI changes this in a specific way: the decisions are no longer in the code. They are in the agent’s runtime behavior, shaped by context, by the underlying foundation model, and by the conditions present at the moment of execution. Two runs of the same pipeline may produce different transformation decisions. That is not a bug — it is the design. It is also the governance problem.

When an autonomous agent makes a data transformation decision that feeds a financial statement, that decision is a control. SOX Section 404 requires internal controls over financial reporting. The CTO is accountable for those controls — not through technical implementation, but through the governance framework that determines what the agent is permitted to do, how its decisions are logged, and who reviews the edge cases. The data team builds the pipeline. The CTO owns the control environment.

The board-level signal is already present. The SEC identified AI governance as a 2025 examination priority. Per SafePaaS’s 2026 analysis, every autonomous AI agent operating in a financial reporting context creates a SOX exposure that requires board-level documentation. Boards are not asking CTOs for deployment plans. They are asking for governance narratives: who approved this, what can it do without human review, and what is the remediation process when it produces an incorrect output?

The answer to those questions is a CTO deliverable, not a data team deliverable. If the answer does not exist, the deployment should not proceed.

The Regulatory Stack: Four Frameworks in Scope

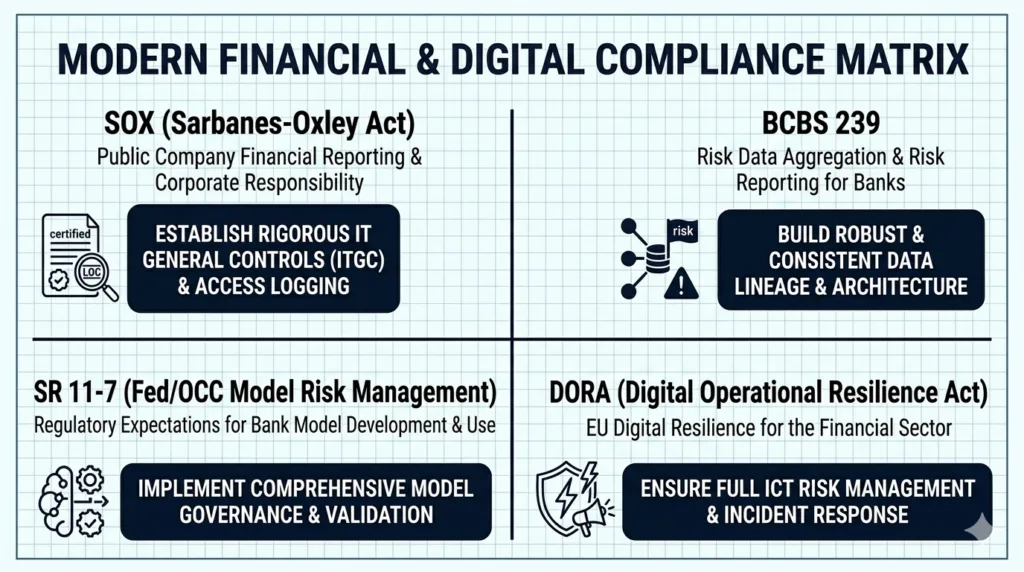

The gap in current coverage of agentic AI compliance in financial services is the combined picture — all four frameworks simultaneously, and what each one specifically demands of the CTO. This section provides it: each framework, its specific implications for agentic pipelines, and what it requires from you.

SOX and Data Lineage

SOX Section 404 mandates internal controls over financial reporting. Any data transformation that feeds a financial statement is in scope. What is new is that agentic AI makes the control harder to document and maintain.

Traditional ETL has deterministic logic: the transformation is defined in code, documented in a data dictionary, and testable by auditors. Agentic pipelines do not work this way. The agent decides, at runtime, how to route and transform data based on current conditions. The “control” is not a function definition — it is the agent’s learned behavior, which may vary by run, by data volume, and by the state of the underlying model.

What CTOs must build: immutable agent decision logs mapped to SOX assertions — completeness, accuracy, validity, restricted access, cutoff — with an artifact showing what the agent decided, on what data, at what time, and why. Grant Thornton’s 2025 research found that AI-enabled continuous SOX monitoring reduces audit preparation time by up to 40%, but only when lineage is properly mapped. Treat each agent as a “control owner” in SOX control documentation: the agent is not just a tool, it is the entity executing the control.
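To make the decision-log requirement concrete, here is a minimal sketch of a hash-chained, append-only log in Python. It is an illustration under stated assumptions, not a reference implementation: the `DecisionLog` class, its field names, and the assertion labels are hypothetical. Hash chaining only makes tampering detectable, so genuine immutability would still depend on write-once storage and access controls outside this code.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical labels for the SOX assertions listed in the text above.
SOX_ASSERTIONS = {"completeness", "accuracy", "validity", "restricted_access", "cutoff"}


def _digest(record):
    """SHA-256 over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class DecisionLog:
    """Append-only log; each entry embeds the hash of the previous entry."""
    entries: list = field(default_factory=list)

    def append(self, agent_id, decision, input_ref, rationale, assertions, timestamp=None):
        unknown = set(assertions) - SOX_ASSERTIONS
        if unknown:
            raise ValueError(f"unknown SOX assertions: {sorted(unknown)}")
        record = {
            "agent_id": agent_id,          # which agent decided
            "decision": decision,          # what it decided
            "input_ref": input_ref,        # on what data
            "rationale": rationale,        # why
            "assertions": sorted(assertions),
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else "0" * 64,
        }
        record["hash"] = _digest(record)   # hash computed before the key is added
        self.entries.append(record)
        return record

    def verify(self):
        """Return True only if no entry was altered, dropped, or reordered."""
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

An auditor-facing check would call `verify()` before sampling entries. Any edit to an earlier record breaks the chain for that record and every one after it.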

BCBS 239 — Risk Data Quality in the Agentic Era

BCBS 239 — the Basel Committee’s Principles for Effective Risk Data Aggregation and Risk Reporting — governs how financial institutions aggregate and report risk data. Principles 3 through 6 apply directly to agentic pipelines that enrich, transform, or route risk data: accuracy and integrity (Principle 3), completeness (Principle 4), timeliness (Principle 5), and adaptability (Principle 6).

The tension is genuine. BCBS 239 requires auditability and reproducibility of risk data aggregation. Agentic pipelines introduce variability — the pipeline adapts to schema changes, routes on runtime conditions, and produces outputs that may differ across runs. Unless agent decision logs are treated as first-class data artifacts, BCBS 239 Principle 3 is structurally at risk.

The argument for agentic pipelines comes from Principle 5: timeliness. BCBS 239 demands near-real-time risk data under stress conditions. Quarterly batch pipelines cannot satisfy this requirement. Per Databricks’ 2025 research, agentic AI on governed data platforms enables real-time stress scenario simulation, replacing quarterly batch processing. The path to BCBS 239 Principle 5 compliance runs through agentic architecture — but only if the governance for Principles 3 and 4 is in place first.

SR 11-7 — Model Risk Management for Dynamic Systems

Federal Reserve Supervisory Letter SR 11-7 requires model development documentation, independent validation, ongoing performance monitoring, and effective challenge for any quantitative model influencing bank decision-making. It applies to agentic AI. The problem is that SR 11-7 was written for a world where models are static artifacts.

GARP’s February 2026 analysis, “SR 11-7 in the Age of Agentic AI: Where the Framework Holds — and Where It Strains,” is the definitive treatment of this intersection. The framework’s core requirements remain applicable. The strain points are specific.

The validation scope problem: SR 11-7 assumes a defined model that can be validated as a unit. Agentic systems are typically multi-model — an orchestrator calling a reasoning model calling a retrieval model. What is the unit of validation? This question does not have a regulatory answer. It must be resolved institutionally before deployment.

The vendor update problem: when AWS, Microsoft, or Anthropic updates the underlying foundation model, the agentic system’s behavior may change without any action by the bank. SR 11-7’s change management provisions require documentation and re-validation when a model changes — but the bank may not be notified that the model changed. Requiring change notification clauses in vendor contracts is not optional. It is the mechanism by which SR 11-7’s change management obligations become enforceable.
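One way an institution might detect an unannounced vendor-side change is to pin the model identifier and version that were validated, then compare them against what the platform reports at run time. The sketch below assumes the vendor exposes a model identifier and version string. That is an assumption, not a statement about any specific provider's API, and every name in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidatedModel:
    """The model identity recorded at the last independent validation."""
    provider: str
    model_id: str
    version: str


def needs_revalidation(validated, observed_model_id, observed_version):
    """True when the model observed at run time differs from the validated one."""
    return (observed_model_id, observed_version) != (validated.model_id, validated.version)


def gate_run(validated, observed_model_id, observed_version):
    """Block the pipeline run and name the reason when the model has drifted."""
    if needs_revalidation(validated, observed_model_id, observed_version):
        return {
            "status": "blocked",
            "reason": (
                f"{validated.provider} model changed from "
                f"{validated.model_id}@{validated.version} to "
                f"{observed_model_id}@{observed_version}; re-validation required"
            ),
        }
    return {"status": "ok", "reason": ""}
```

A run-time check like this complements contractual change notification and does not replace it. It catches only changes the vendor surfaces through a version identifier.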

The explainability requirement: SR 11-7 requires documentation detailed enough that unfamiliar parties can understand the model’s operation. Foundation model-based agents frequently cannot satisfy this in traditional ways. The BPI noted in October 2025 that many banks are applying the full SR 11-7 model risk management inventory process to all AI tools. The operational burden is significant — but the framework applies regardless of implementation complexity.

DORA — Third-Party Resilience for AI Platform Vendors

DORA (EU Regulation 2022/2554, effective January 17, 2025 per EIOPA) applies to all EU financial entities and their ICT third-party service providers. Agentic AI platforms — AWS Bedrock, Azure OpenAI, Salesforce Agentforce, and their peers — are ICT third-party providers in scope.

Four specific obligations are directly relevant. First, registration: every agentic AI platform vendor must be registered in the institution’s ICT third-party register with a full risk assessment and documentation of the vendor’s sub-outsourcing chain. Second, criticality classification: the institution must assess whether the agentic AI system supports “critical or important functions,” which triggers enhanced obligations including more stringent exit strategy requirements and resilience testing. Third, RTO and RPO: defined Recovery Time Objective and Recovery Point Objective targets must exist for AI-driven pipeline components supporting critical functions. Fourth, incident classification: if an autonomous agent causes a pipeline failure, the institution must determine whether the failure meets DORA’s reportable incident criteria.

North American CTOs should not skip the DORA section. Any institution with EU-regulated entities carries DORA obligations, and DORA’s concentration risk provisions are increasingly referenced by North American regulators as a model for AI third-party risk management. This makes the framework’s logic relevant even to institutions without direct EU exposure.

Where Agentic AI Delivers Real Value in Financial Services

The regulatory requirements are real. So is the business case. These use cases are active deployments, and the governance frameworks above are the price of admission.

AML and Fraud Detection Pipeline Automation

Deepfake fraud increased 1,100% in the United States between 2025 and 2026, per FinTech Wrapup’s 2026 reporting. The manual triage model — alert queues, investigator review, SAR filing running days to weeks per case — is not viable at that volume.

Agentic AML architectures replace the queue with a pipeline. An alert triage agent scores and prioritizes incoming alerts using real-time risk signals while an evidence gathering agent retrieves transaction records, customer profiles, AML registries, and external watchlists autonomously. An investigation synthesis agent produces a structured case overview. A SAR drafting agent generates the narrative. Human review remains required before filing. For data team implementation patterns, see the agentic AI data pipeline automation guide.

The CTO-specific obligation is explicit: every agent action in an AML pipeline is a BSA/AML examination artifact. Agent decision logs are not optional. The examination will ask what the agent decided, what data it accessed, and what threshold it applied.

Risk Data Aggregation and BCBS 239 Compliance

Real-time risk data aggregation under stress scenarios — what BCBS 239 Principle 5 demands — is structurally difficult with batch pipelines. Agentic pipelines address this directly: continuous monitoring of upstream data sources, dynamic routing when source schemas change, automatic flagging of quality anomalies, and real-time escalation when aggregation thresholds are breached.

Per Databricks’ 2025 work on BCBS 239 compliance, agentic AI on governed data platforms enables real-time stress scenario simulation and concentration risk detection, replacing quarterly batch processing. The concrete outcome: reduced time-to-report for regulatory submissions, fewer manual interventions for pipeline exceptions, and auto-generated audit-ready lineage documentation — if the governance architecture is in place.

KYC Refresh and Client Onboarding

Client onboarding in banking involves more than 45 discrete steps: compliance checks, document review, underwriting, sanctions screening, beneficial ownership verification. Agentic systems can compress this from days to near-real-time while maintaining a complete audit trail — an outcome with particular value for wealth management and institutional banking CTOs managing high-touch client relationships at scale.

Per Accenture’s research, agentic AI freed 20% of claims handler capacity in insurance by automating data gathering and initial case synthesis, reserving human judgment for the cases where it adds the most value. For deployment approaches that preserve your existing data infrastructure, see the stack-preserving deployment overview.

The Four Governance Questions Every Financial Services CTO Must Answer

This section provides the decision framework. Each question maps to one or more of the regulatory obligations above and is designed to be answerable before deployment begins.

- What is the regulatory classification of every decision this agent makes?

- Map each agent action to the applicable framework: Does it feed financial reporting? (SOX — immutable audit trail required.) Does it feed risk assessment or capital calculation? (SR 11-7 — model validation and documentation required.) Does it run on an EU-registered ICT provider? (DORA — third-party register, criticality assessment, exit strategy.) Does it aggregate risk data? (BCBS 239 — data quality principles apply.) An agent operating in an AML pipeline may trigger all four frameworks simultaneously. The mapping must be explicit and documented before deployment. This is the foundation of any agentic AI governance framework for financial services.

- Where does human oversight kick in?

- Current regulation does not mandate human review for every agent action, but it does for consequential decisions: SAR filing, credit decisions, regulatory reporting, any output that directly feeds a board-level disclosure. The criteria for triggering human review must be defined in governance policy, not left to the agent’s configuration. If an agent is making decisions that regulators will examine, the threshold for human intervention must be specified, documented, and tested.

- How do you govern vendor-side model changes?

- When AWS, Microsoft, or Anthropic updates the underlying foundation model, the agentic system’s behavior may change. The bank may not receive advance notice. DORA requires change notification provisions in ICT provider contracts. SR 11-7’s change management provisions treat a vendor model update as a model change under the governance framework — triggering documentation and re-validation obligations. Vendor contracts must include defined lead times for change notification, and the institution must have a re-validation process that can be triggered by a vendor-side update.

- What is your concentration risk exposure?

- Map which critical pipeline functions depend on which AI infrastructure providers. If more than one critical function depends on the same hyperscaler’s AI infrastructure, there is a single-point-of-failure risk that DORA explicitly concerns itself with. The next section addresses this in detail.
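The first two questions lend themselves to an explicit, testable mapping. The following Python sketch encodes the four classification questions and the consequential-decision list from the text. The `AgentAction` fields and decision-type labels are hypothetical, and a real policy would be set by governance and compliance staff rather than hard-coded.

```python
from dataclasses import dataclass

# Decision types the text names as requiring human review; labels are hypothetical.
CONSEQUENTIAL_DECISIONS = {
    "sar_filing",
    "credit_decision",
    "regulatory_reporting",
    "board_disclosure",
}


@dataclass(frozen=True)
class AgentAction:
    name: str
    decision_type: str
    feeds_financial_reporting: bool = False
    feeds_risk_or_capital: bool = False
    runs_on_eu_ict_provider: bool = False
    aggregates_risk_data: bool = False


def applicable_frameworks(action):
    """Map one agent action to the frameworks it triggers, per the questions above."""
    checks = [
        (action.feeds_financial_reporting, "SOX"),
        (action.feeds_risk_or_capital, "SR 11-7"),
        (action.runs_on_eu_ict_provider, "DORA"),
        (action.aggregates_risk_data, "BCBS 239"),
    ]
    return [framework for applies, framework in checks if applies]


def requires_human_review(action):
    """Human review is decided by policy (this set), not by agent configuration."""
    return action.decision_type in CONSEQUENTIAL_DECISIONS
```

Keeping the mapping as data rather than prose means it can be versioned, reviewed, and run against every action an agent is permitted to take before deployment.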

The Vendor Concentration Problem Nobody Is Talking About

Most CTOs evaluate vendor diversity at the contract layer. They use AWS Bedrock for one function, Azure OpenAI for another, and a third-party orchestration platform for a third. On paper, this looks like diversification.

It is not, necessarily. The insight identified in the Swept AI Financial Services AI Risk Management Framework and corroborated by Hogan Lovells’ analysis of vendor update risk: vendor diversity at the contract layer can disguise concentration risk when all “different” vendors ultimately depend on the same two or three hyperscaler AI infrastructures. The vendor contracts are different. The underlying AI inference infrastructure — the hyperscaler services on which most agentic AI platforms ultimately depend — is not.

This is precisely the systemic concern DORA’s concentration risk provisions address. The regulation treats the scenario where a critical mass of financial entities depends on the same underlying AI infrastructure, regardless of how many vendor contracts are in place, as a systemic risk that regulators may intervene on.

What this means operationally: the CTO’s concentration risk analysis must operate at the infrastructure layer, not just the vendor layer. Exit strategy documentation must address infrastructure concentration, not just vendor substitution. If the exit strategy from Vendor A assumes migration to Vendor B, but Vendor B depends on the same hyperscaler AI infrastructure as Vendor A, the exit strategy is incomplete.
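The infrastructure-layer analysis can be sketched as a two-level lookup: critical function to vendor, then vendor to underlying infrastructure. The vendor and infrastructure names below are placeholders. In practice the second mapping would come from the sub-outsourcing chain documentation the vendor supplies.

```python
from collections import defaultdict


def infrastructure_concentration(function_vendor, vendor_infrastructure):
    """Return infrastructures that more than one critical function depends on."""
    by_infra = defaultdict(set)
    for function, vendor in function_vendor.items():
        by_infra[vendor_infrastructure[vendor]].add(function)
    return {
        infra: sorted(functions)
        for infra, functions in by_infra.items()
        if len(functions) > 1
    }


def exit_strategy_incomplete(current_vendor, fallback_vendor, vendor_infrastructure):
    """An exit plan is incomplete if the fallback shares the same infrastructure."""
    return vendor_infrastructure[current_vendor] == vendor_infrastructure[fallback_vendor]


# Placeholder data: three contracts, but only two underlying infrastructures.
function_vendor = {
    "aml_triage": "vendor_a",
    "risk_aggregation": "vendor_b",
    "kyc_refresh": "vendor_c",
}
vendor_infrastructure = {
    "vendor_a": "hyperscaler_x",
    "vendor_b": "hyperscaler_x",
    "vendor_c": "hyperscaler_y",
}

shared = infrastructure_concentration(function_vendor, vendor_infrastructure)
```

With this placeholder data, `shared` flags `hyperscaler_x` as carrying both AML triage and risk aggregation even though they sit under different vendor contracts.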

Vendor Governance Checklist: Eight Questions Before Signing an Agentic AI Contract

The following eight requirements should be present in any agentic AI vendor contract for a regulated financial services environment. These are the minimum required to satisfy the regulatory frameworks above.

- Complete audit log of every agent action, available for regulatory inspection on demand

- Data residency guarantees consistent with applicable regulations (DORA, GDPR, PIPEDA)

- Sub-outsourcing chain documentation — not just the vendor’s name, but the names of their infrastructure providers

- Change notification commitments for underlying model updates, with defined lead time

- Explainability artifacts suitable for SR 11-7 independent validation documentation

- Defined RTO and RPO for AI pipeline components supporting critical or important functions

- Contractual exit and portability provisions — not a termination clause, but a data portability and transition support commitment

- Penetration testing and resilience testing support for DORA TLPT requirements

FAQ

What is agentic AI in financial services data operations?

Agentic AI in financial services refers to autonomous AI systems that go beyond executing predefined tasks. Unlike traditional automation such as RPA or ETL, agentic AI can plan multi-step workflows, make decisions based on runtime context, call external tools and data sources, and adapt its behavior without new human instructions. In data pipeline operations, this means agents that can detect schema changes, route data dynamically, flag anomalies, and initiate downstream actions — all autonomously. The distinction matters for CTOs because autonomous decision-making triggers specific regulatory obligations around model risk management, audit trails, and operational resilience.

What regulations apply to agentic AI in financial services data pipelines?

Four primary frameworks are in scope. SOX requires complete audit trails for any data transformation feeding financial reporting. BCBS 239 sets data quality, completeness, and timeliness requirements for risk data aggregation. SR 11-7 applies model risk management and independent validation requirements to AI models used in risk-sensitive decisions. DORA — the EU Digital Operational Resilience Act, effective January 2025 — requires registration, risk assessment, and exit strategy documentation for AI platform vendors as ICT third-party providers.

Does SR 11-7 apply to agentic AI systems in banks?

Yes. SR 11-7’s model risk management framework applies to any quantitative model used in bank decision-making, including agentic AI systems that influence risk assessments, credit decisions, or regulatory reporting. The framework’s foundational requirements (independent validation, ongoing monitoring, documentation of logic) remain applicable. The primary challenge is that SR 11-7 was written for static, deterministic models. Agentic AI introduces dynamic behavior and vendor-side model updates that strain the framework. Banks must define the unit of validation, build triggered re-validation processes for model updates, and maintain explainability documentation.

What does DORA require for AI data pipeline vendors?

Under DORA, effective January 17, 2025, EU financial entities must treat agentic AI platform providers as ICT third-party service providers. This requires registering the vendor in the institution’s ICT third-party register, assessing whether the AI supports critical or important functions, documenting exit strategies and sub-outsourcing chains, and incorporating AI systems into operational resilience testing.

The regulatory requirements this piece outlines — data residency, immutable audit logs, explainability documentation, and vendor governance — are architectural requirements, not just policy checkboxes. An on-premises or private cloud deployment model, unified governance across agents and data, and contractual controls over vendor-side model changes are the structural conditions under which agentic AI in regulated financial services pipelines can be deployed without creating unmanageable audit exposure.

Beam Data’s AI Hub is designed for financial services and other regulated environments where these requirements are non-negotiable. The platform deploys on the client’s own infrastructure (no data leaves the environment) with unified governance across agents, models, data, and workflows, and immutable audit logs that satisfy SOX, SR 11-7, and DORA documentation requirements by design. If you are evaluating agentic AI deployment options for a regulated data environment, speak with the Beam Data team to see how the architecture maps to your governance obligations.