Key Takeaways

- Theoretical governance defines the what. Operational governance enforces the how — through executable protocols, automated monitoring, and real-time guardrails.

- Static policy documents are no longer sufficient. In 2026, EU AI Act enforcement deadlines and rising regulatory scrutiny mean governance gaps are legal liabilities, not just reputational risks.

- The velocity gap is real: enterprise models evolve daily while governance reviews happen quarterly. Automation is the only credible way to close it.

- Only 1 in 5 enterprises has a mature governance model for autonomous AI agents — meaning 80% are scaling AI on unstable ground.

- AI Hub by Beam Data provides the centralized control plane that makes governance operational: audit trails, semantic security, automated guardrails, and cost tracking built into the architecture.

1. Why Is There a Gap Between AI Policy and AI Practice?

Operational enterprise AI governance is the practical framework for overseeing the deployment and day-to-day management of AI systems. Unlike high-level policy, it focuses on technical controls, continuous monitoring, and risk mitigation. By building accountability directly into engineering and business workflows, it keeps models compliant, ethical, and performant throughout their lifecycle.

Most enterprises have an AI ethics statement. Very few have an AI operating manual.

The distinction matters more than it used to. In 2025, most governance frameworks were aspirational documents — reviewed quarterly by a legal team, signed by leadership, and rarely consulted by the engineers actually deploying models. That approach was imperfect but survivable. In 2026, it is not.

Most of the EU AI Act’s high-risk system obligations become applicable in August 2026. Regulators are now signaling that documentation gaps themselves may constitute violations, not just the incidents they fail to prevent.

The gap between high-level policy and daily engineering reality is what Dataversity calls ‘governance debt’ — the accumulating distance between what an organization says its AI systems should do and what they are actually doing in production. That debt compounds silently until it surfaces in a compliance audit, a biased decision, or a model that has drifted so far from its original training context that its outputs are no longer defensible.

This post focuses on the specific technical and organizational shifts required to move governance from a document to a capability, and on the role a centralized platform plays in making that shift achievable.

2. Theoretical Governance: The Safety Net That Isn’t Catching Anything

Theoretical governance is built on aspirational principles. Fairness. Transparency. Accountability. These are the right values. The problem is that values, on their own, are not enforceable. They do not catch a model that has drifted. They do not flag a prompt injection attempt. They do not produce the audit trail that a regulator requires.

The static vs. dynamic problem

Most governance frameworks are reviewed on a quarterly or annual cycle. Most enterprise AI models evolve continuously — retrained on new data, fine-tuned for new use cases, updated by vendors on schedules that engineering teams may not fully control. The policy that described the model at deployment is often irrelevant to the model in production six months later. Governance that cannot keep pace with model velocity is not governance — it is documentation.

The silo problem

Legal and compliance teams write the rules. Engineering teams build and operate the systems. In most enterprises, these groups share almost no tooling. Legal works in documents and policies. Engineering works in code and pipelines. Without a shared operational layer — a platform that translates policy into enforceable technical constraints — the rules that compliance writes never reach the systems they are meant to govern.

When governance feels like red tape

When governance is purely theoretical, it functions as a speed bump rather than a safety guardrail. Teams route around it because it adds friction without adding protection. Ivanti’s 2026 State of Cybersecurity Report found that only 50% of organizations have formal guardrails in place for AI deployment and operation — a number that has not improved proportionally with the scale of AI adoption.

3. Operational Governance: Making Ethics Executable

Operational governance is not a philosophy — it is an engineering discipline. The shift from theoretical to operational means replacing aspirational language with measurable constraints, manual reviews with automated checks, and siloed ownership with cross-functional accountability.

Automated guardrails and real-time monitoring

The core operational requirement is continuous monitoring: tracking model drift, bias regression, toxicity scores, hallucination rates, and policy violations in real time, not at the next quarterly review. This means setting Population Stability Index (PSI) thresholds that trigger alerts when a model’s input distribution shifts materially from its training baseline. It also means automated kill-switch logic: if a toxicity or hallucination rate exceeds a defined threshold, the agent is taken offline automatically, with no human intervention required.
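As a rough illustration of the PSI check described above, the sketch below computes PSI as the sum over bins of (actual − expected) × ln(actual / expected) and compares it to an alert threshold. The function names, the equal-width binning, the smoothing constant, and the 0.2 threshold (a commonly quoted rule of thumb) are all assumptions for illustration, not values taken from any specific platform.

```python
import math
from typing import List, Sequence

# Assumed alert level: 0.2 is a widely used rule of thumb, not a value from this article.
PSI_ALERT_THRESHOLD = 0.2


def psi(baseline: Sequence[float], current: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `current` against `baseline`."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_alert(baseline: Sequence[float], current: Sequence[float],
                threshold: float = PSI_ALERT_THRESHOLD) -> bool:
    """True when the input distribution has shifted past the alert threshold."""
    return psi(baseline, current) > threshold
```

In practice the baseline would be a sample of training-time inputs for one feature, and the check would run on a schedule against recent production inputs.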

The Cloud Security Alliance frames this distinction precisely: guardrails protect conversations; governance protects execution. As AI systems move from answering questions to taking actions — querying databases, triggering workflows, modifying records — the risk profile changes. Language filters are insufficient. Execution governance, with centralized policy enforcement and runtime auditability, is required.

Cross-functional AI fusion teams

Effective operational governance cannot live in a single department. The organizations making it work in 2026 have moved ownership to what practitioners call ‘AI Fusion Teams’: integrated committees spanning Legal, DevOps, and Data, with shared tooling and shared accountability. Each function contributes what the others cannot: Legal provides regulatory context, DevOps owns the pipeline, Data owns model quality. The platform they share is what makes that collaboration enforceable rather than advisory.

Risk-based triage

Not every AI system requires the same level of scrutiny. A recommendation engine for internal knowledge search carries different risk than a model that makes credit decisions or routes patient care. Operational governance applies risk-based triage — categorizing AI projects by impact level and applying proportional controls. High-risk systems get continuous monitoring, mandatory human-in-the-loop gates, and full audit trails. Lower-risk systems get automated checks with lighter oversight. The triage system makes governance scalable; trying to apply maximum scrutiny to every model is how governance programs become bottlenecks.
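One way to picture risk-based triage is as a lookup from impact tier to a set of controls. The sketch below is illustrative only: the two tiers, the domain labels, and the `Controls` fields are hypothetical names, and a real program would likely use more tiers and richer criteria than a domain lookup.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"
    LOW = "low"


@dataclass(frozen=True)
class Controls:
    continuous_monitoring: bool
    human_in_the_loop: bool
    full_audit_trail: bool
    automated_checks: bool


# High-risk controls follow the text; giving high-risk systems automated
# checks as well is an assumption.
CONTROLS_BY_TIER = {
    RiskTier.HIGH: Controls(True, True, True, True),
    RiskTier.LOW: Controls(False, False, False, True),
}

# Hypothetical domain labels based on the examples in the text.
HIGH_IMPACT_DOMAINS = frozenset({"credit_decision", "patient_care_routing"})


def triage(domain: str) -> RiskTier:
    """Assign a tier from the system's decision domain."""
    return RiskTier.HIGH if domain in HIGH_IMPACT_DOMAINS else RiskTier.LOW


def required_controls(domain: str) -> Controls:
    """Controls proportional to the system's risk tier."""
    return CONTROLS_BY_TIER[triage(domain)]
```

Keeping the mapping in one version-controlled place means a change in scrutiny for a tier is a reviewed code change rather than an edit to a policy PDF.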

4. Theoretical vs. Operational: At a Glance

| Feature | Theoretical Governance | Operational Governance |
| --- | --- | --- |
| Primary Goal | Regulatory Compliance | Risk-Adjusted Performance |
| Documentation | Static Policy PDFs | Living Model Cards & Data Lineage |
| Monitoring | Periodic Manual Audits | Continuous Automated Observability |
| Ownership | Legal / Compliance (siloed) | Shared: IT, Data & Business |
| Agility | Slow (manual approval gates) | Fast (automated safeguards + CI/CD) |
| Risk Response | Reactive: post-incident review | Proactive: drift detection + kill switch |

The pattern across every row is the same: theoretical governance is static, siloed, and reactive. Operational governance is continuous, shared, and proactive. The difference is not intent — most organizations with theoretical governance genuinely intend to govern responsibly. The difference is infrastructure.

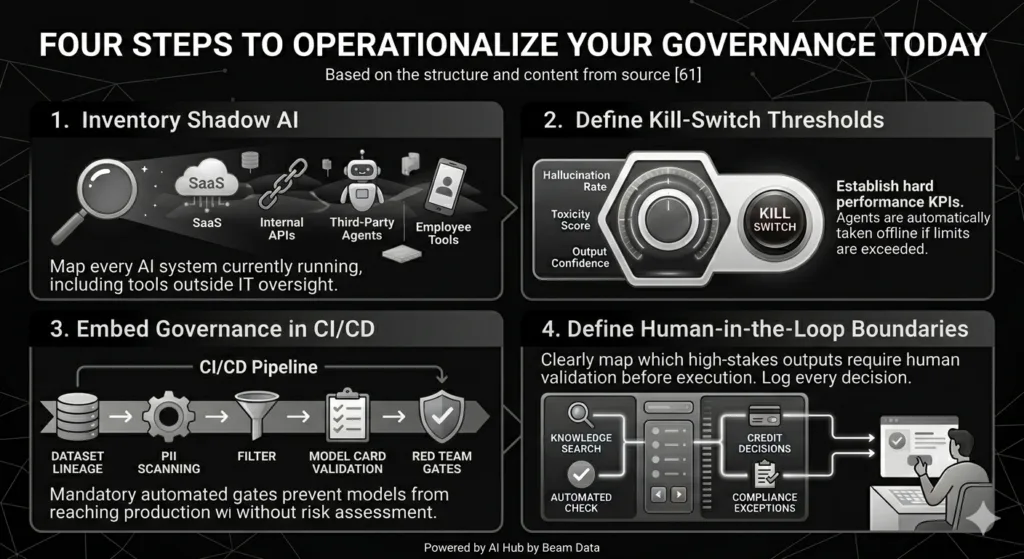

5. Four Steps to Operationalize Your Governance Today

- Inventory shadow AI. Map every AI system currently running in your environment — SaaS tools with embedded AI, internal APIs using LLMs, third-party automation agents, and any employee-deployed tools outside IT oversight. You cannot govern what you cannot see. Shadow AI is not hypothetical: a marketing team using an unsanctioned LLM on customer data, a developer passing sensitive inputs to a public API — these exist in most enterprises today.

- Define kill-switch thresholds. Establish hard performance KPIs for every production AI system: if hallucination rate exceeds 5%, if toxicity score crosses a defined threshold, if output confidence drops below a defined floor — the agent is automatically taken offline. These thresholds should be documented, version-controlled, and enforced by the platform, not dependent on human review catching a problem after the fact.

- Embed governance in CI/CD. Risk assessments should be mandatory automated gates in the software development lifecycle — not post-deployment reviews. Dataset lineage checks, PII scanning, model card validation, and red-team gates should run before any model reaches production. IBM’s governance framework is explicit on this: deployment is controlled through gated approvals in CI/CD pipelines. Organizations that make this change report fewer post-deployment governance issues and faster production timelines.

- Define human-in-the-loop boundaries. Not every AI decision requires human review — but some do, and those boundaries must be explicit, not implicit. Map which high-stakes outputs require human validation before execution: credit decisions, compliance exceptions, security-sensitive configuration changes, patient-facing recommendations. Document the escalation path. Log the decision. The organizations whose governance survived scrutiny at Nexus 2026 built these boundaries into architecture before scaling, not after.
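The kill-switch and human-in-the-loop steps above can be sketched as a single routing decision made before an agent action executes. Only the 5% hallucination limit comes from the text; the other threshold values, the metric names, and the action labels are hypothetical placeholders that each organization would define for itself.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class KillSwitchThresholds:
    max_hallucination_rate: float = 0.05  # the 5% figure from the text
    max_toxicity_score: float = 0.30      # assumed value
    min_output_confidence: float = 0.60   # assumed value


def breached(metrics: Dict[str, float], limits: KillSwitchThresholds) -> List[str]:
    """Return the names of the metrics that violate their threshold."""
    reasons = []
    if metrics["hallucination_rate"] > limits.max_hallucination_rate:
        reasons.append("hallucination_rate")
    if metrics["toxicity_score"] > limits.max_toxicity_score:
        reasons.append("toxicity_score")
    if metrics["output_confidence"] < limits.min_output_confidence:
        reasons.append("output_confidence")
    return reasons


# Hypothetical labels for the high-stakes outputs listed in the text.
HUMAN_REVIEW_REQUIRED = frozenset({
    "credit_decision",
    "compliance_exception",
    "security_config_change",
    "patient_recommendation",
})


def next_step(action_type: str, metrics: Dict[str, float],
              limits: KillSwitchThresholds = KillSwitchThresholds()) -> str:
    """Decide whether to halt the agent, escalate to a human, or proceed."""
    if breached(metrics, limits):
        return "take_offline"
    if action_type in HUMAN_REVIEW_REQUIRED:
        return "await_human_approval"
    return "execute"
```

Because the thresholds live in a frozen dataclass, they can be committed to version control and reviewed like any other change, which matches the requirement that they be documented and enforced by the platform rather than by ad hoc human review.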

6. How Beam Data AI Hub Closes the Governance Gap

The four steps above are the right sequence. The practical challenge is that executing them across a complex enterprise AI stack — multiple models, multiple vendors, multiple teams — requires a centralized control plane that most organizations do not have.

This is where AI Hub by Beam Data fits in the governance architecture. Rather than treating governance as a separate layer bolted onto an existing AI stack, AI Hub builds the control infrastructure into the platform itself.

Semantic security

AI Hub’s semantic security layer monitors AI interactions in real time for prompt injection attempts, data leakage, and policy violations — not just at the conversational level but at the execution level. When an agent attempts to access data outside its authorized scope, or when an incoming prompt matches a known injection pattern, the system flags and blocks the action before it reaches execution. This is the operational implementation of what theoretical governance describes as ‘appropriate safeguards.’
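To make the idea of an execution-level check concrete, here is a minimal sketch of a pre-execution filter that blocks an action when the prompt matches a known injection pattern or the requested resource is outside the agent's authorized scope. It is not AI Hub's implementation or API; the function name, the two regular expressions, and the resource labels are assumptions chosen for illustration, and real detection would go well beyond simple pattern matching.

```python
import re
from typing import FrozenSet, Tuple

# Hypothetical examples of known injection phrasings.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your )?system prompt", re.IGNORECASE),
]


def check_action(prompt: str, requested_resource: str,
                 authorized_resources: FrozenSet[str]) -> Tuple[bool, str]:
    """Return (allowed, reason) for an agent action before it executes."""
    for pattern in INJECTION_PATTERNS:
        if pattern.search(prompt):
            return False, "prompt matches a known injection pattern"
    if requested_resource not in authorized_resources:
        return False, "resource outside authorized scope"
    return True, "allowed"
```

For example, an agent authorized only for `frozenset({"kb_articles"})` that requests `"customer_pii"` would be blocked with the scope reason, regardless of how benign the prompt looks.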

Centralized audit trails and cost tracking

Every agent action in AI Hub generates an immutable audit log: what data was accessed, which model was invoked, what decision was made, and at what cost. This is not a reporting feature — it is the evidentiary foundation that makes regulatory compliance demonstrable rather than asserted. When an auditor asks for the decision trail on a specific AI-assisted outcome, the answer is retrievable in seconds, not reconstructed over days.
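A common way to make a log tamper-evident is to chain entries by hash, so that altering any earlier record invalidates every later one. The sketch below shows that general technique using the four fields named above; it is an assumption-laden illustration, not a description of how AI Hub stores its logs, and the function and field names are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(fields: Dict[str, object], prev_hash: str) -> str:
    """SHA-256 over the entry fields plus the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, **fields}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: List[dict], data_accessed: str, model: str,
                 decision: str, cost_usd: float) -> dict:
    """Append an audit record linked to the previous record by hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    fields = {
        "data_accessed": data_accessed,
        "model": model,
        "decision": decision,
        "cost_usd": cost_usd,
    }
    entry = dict(fields, prev=prev_hash, hash=_digest(fields, prev_hash))
    log.append(entry)
    return entry


def verify(log: List[dict]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS_HASH
    for entry in log:
        fields = {k: v for k, v in entry.items() if k not in ("prev", "hash")}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(fields, prev_hash):
            return False
        prev_hash = entry["hash"]
    return True
```

A hash chain detects tampering rather than preventing it; pairing it with write-once storage is one way to approach the stronger immutability guarantee.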

Governance as architecture, not policy

AI Hub by Beam Data moves governance from a document that describes what should happen to an architecture that enforces it. The audit trails, cost controls, kill-switch thresholds, and semantic security controls are not configured after deployment — they are structural properties of the platform.

For enterprises that have been building governance frameworks in policy documents while their AI systems scale in engineering pipelines, this is the shift that closes the gap. Governance that lives in the same infrastructure as the AI it governs is governance that actually works.

7. Governance as a Competitive Advantage

The framing of AI governance as a compliance burden is the wrong mental model, and it leads to the wrong architecture. Organizations that treat governance as a constraint build systems designed to tolerate as little of it as possible. Organizations that treat governance as infrastructure build systems that scale faster because they are trusted.

The evidence supports this. IBM’s governance implementation guide notes that with governance in place, teams can move faster with confidence — not slower. Automated guardrails reduce the manual review overhead that slows deployment. Audit trails reduce the time spent reconstructing incident timelines. Shared ownership between Legal and Engineering reduces the friction that produces bottlenecks.

Governance done operationally is not red tape. It is the infrastructure that lets you deploy the next agent without asking whether the last one is still behaving as intended. That confidence compounds. The enterprises investing in operational governance infrastructure now are not just protecting themselves from the next regulatory deadline; they are building the trust required to scale AI faster than competitors who are still governing by policy document.

Ready to move from policy documents to enforceable controls? Explore our Enterprise AI Governance Platform and see how Beam Data AI Hub makes governance operational from day one.

Frequently Asked Questions

Why is theoretical governance no longer sufficient in 2026?

Theoretical governance lacks the technical constraints required to prevent real-time risks in production AI systems. Models drift, agents act, and regulatory obligations are now legally enforceable. The EU AI Act’s August 2026 enforcement deadline for high-risk systems means that governance gaps are no longer just reputational risks — they are legal ones. Static policy documents reviewed quarterly cannot keep pace with systems that evolve daily.

What is the first practical step toward operational governance?

Total visibility. You cannot govern what you cannot see. The first step is inventorying every AI system currently running in your environment — including shadow AI deployed outside IT oversight. This includes SaaS tools with embedded AI, internal APIs using LLMs, third-party automation agents, and any employee-built tools. Without a complete inventory, governance frameworks are addressing a fraction of actual risk.

What is semantic security and why does it matter?

Semantic security is the real-time monitoring layer that detects prompt injection attempts, unauthorized data access, and policy violations at the execution level — not just at the conversational output level. As AI systems move from generating responses to taking actions, language-level guardrails are insufficient. Semantic security extends governance to what agents do, not just what they say.

How does AI Hub by Beam Data improve compliance?

AI Hub provides a single source of truth for audit trails, cost tracking, and security enforcement across all AI workflows. Every agent action generates an immutable log. Kill-switch thresholds are enforced at the platform level. Semantic security blocks prompt injections and unauthorized data access in real time. These capabilities make compliance demonstrable — evidenced by system logs — rather than asserted through policy documents.

Who should own the AI governance process?

Enterprise AI governance works best when it is owned by a cross-functional team, what practitioners call an AI Fusion Team, spanning Legal, DevOps, and Data. Legal provides regulatory context and defines policy boundaries. DevOps owns the CI/CD pipeline and enforces governance gates at deployment. Data owns model quality monitoring and drift detection. The critical requirement is shared tooling: a centralized platform that translates policies into enforceable technical controls accessible to all three groups.

What is model drift and why is it a governance problem?

Model drift occurs when the statistical distribution of a model’s inputs or outputs shifts materially from its training baseline — typically because the real-world data environment has changed. A model that was accurate and unbiased at deployment may become neither over time without continuous monitoring. Governance frameworks that rely on periodic manual audits will not detect drift until after it has caused real harm. Operational governance addresses this through automated PSI monitoring and predefined performance thresholds that trigger alerts or automatic rollback when drift is detected.

References

Cloud Security Alliance. (2026). Identity and access gaps in the age of autonomous AI: Redefining guardrails vs. governance. https://cloudsecurityalliance.org/research/artifacts/ai-agent-governance-2026/

Dataversity. (2026, January 15). Beyond human-in-the-loop: Why data governance must be a system’s property. https://www.dataversity.net/beyond-human-in-the-loop/

Forbes Technology Council. (2026, March 12). The five-dimension assessment for AI readiness: Why 80% of enterprises are failing at scale. Forbes. https://www.forbes.com/sites/forbestechcouncil/2026/03/12/ai-readiness-assessment/

IBM. (2026). AI governance implementation guide: From ethics to operations. IBM Training and Strategy. https://www.ibm.com/reports/governance-implementation-2026

Ivanti. (2026). 2026 state of cybersecurity report: Navigating the AI-driven threat landscape. https://www.ivanti.com/resources/v/doc/state-of-cybersecurity-2026

Ponemon Institute. Managing risks and optimizing the value of AI, GenAI, and agentic AI: A global study. Sponsored by OpenText. https://www.ponemon.org/research/managing-ai-risk-2026