Key Takeaways

- Over 30 AI governance tools now compete for enterprise budgets — and the most common shortlist mistake is mixing two fundamentally different product categories.

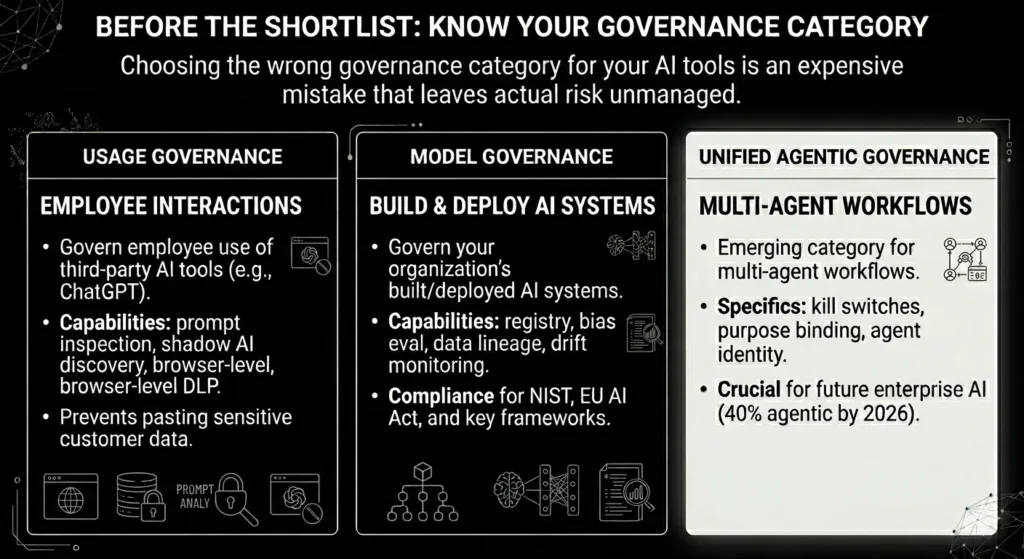

- The market divides into two established categories: usage governance (governing how employees use third-party AI) and model governance (governing the AI systems you build). A third, emerging category, unified agentic governance, covers both plus multi-agent workflow controls.

- Only 6% of enterprises have advanced AI security strategies, even though 40% of enterprise applications are expected to embed AI agents by the end of 2026. Governance maturity is lagging while deployment accelerates.

- EU AI Act obligations for high-risk systems apply from August 2026, and the Act's penalties run up to €35 million or 7% of global turnover for the most serious violations. Compliance documentation gaps are now legal liabilities, not just audit findings.

- AI Hub by Beam Data is the only platform in this shortlist that natively addresses all three governance layers — usage, model, and agentic — with on-premise deployment and semantic security built in.

1. The Shortlist Problem

You have read enough about why AI governance matters. The EU AI Act, the Deloitte 2026 report, and the Gartner predictions all point in the same direction. What most of those sources do not give you is a practical answer to the question you are actually asking: which tools belong on my shortlist, and how do I choose between them?

This guide is written for the reader who has already decided to act. It cuts through a market of 30-plus tools with a structured evaluation framework, a categorized shortlist of the platforms that matter, and a clear explanation of where AI Hub by Beam Data fits — so you can arrive at a decision, not just more research.

By the end of this guide, you will have a working framework for selecting an AI governance platform that matches your actual risk profile — not just the one with the most polished demo.

One important starting point: the AI governance market has a category problem that trips up most buyers before they even reach the shortlist.

2. Category

2.1. Before the Shortlist: Know Your Governance Category

The most expensive shortlist mistake is evaluating tools from the wrong category. There are three distinct types of AI governance platform, and choosing the wrong one means spending budget on a solution that addresses a risk you do not have while leaving your actual risk unmanaged.

Usage governance

These tools govern how your employees interact with third-party AI tools — ChatGPT, Microsoft Copilot, Claude, Gemini. Their core capabilities are real-time prompt inspection, shadow AI discovery, data leakage prevention at the browser level, and policy enforcement. If your primary AI risk is employees pasting sensitive customer data into an unsanctioned AI tool, this is your category. Leading example: Strac.

Model governance

These tools govern AI systems your organization builds and deploys. Their core capabilities are model registry, bias evaluation, data lineage, model cards, drift monitoring, and compliance documentation for frameworks like NIST AI RMF and the EU AI Act. If your primary risk is models reaching production without adequate documentation or monitoring, this is your category. Leading examples: IBM watsonx.governance, Credo AI.

Unified agentic governance

The emerging third category governs all of the above plus the specific risks of multi-agent AI workflows: kill switches, purpose binding, agent identity management, cross-workflow audit trails, and semantic security. With 40% of enterprise applications expected to embed AI agents by the end of 2026, up from under 5% in 2025, this category is moving from optional to essential. AI Hub by Beam Data operates here.

The decision rule: if your risk is employees and third-party tools, start with usage governance. If your risk is internal model deployment, start with model governance. If you are deploying multi-agent workflows and need both plus agentic controls, you need a unified platform — and buying point solutions in the first two categories will not get you there.
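The decision rule above can be sketched as a small function. This is an illustrative reading of the rule, not a vendor tool: the `RiskProfile` flags and the `governance_category` name are hypothetical, and the branch for "both risks, no agents" is an assumption, since the rule itself does not pick a single category for that case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskProfile:
    """Hypothetical flags describing where an organization's AI risk sits."""
    employees_use_third_party_ai: bool
    builds_or_deploys_models: bool
    runs_multi_agent_workflows: bool


def governance_category(profile: RiskProfile) -> str:
    """Map a risk profile to a starting category, following the decision rule."""
    # Multi-agent workflows need usage and model coverage plus agentic controls.
    if profile.runs_multi_agent_workflows:
        return "unified agentic governance"
    # Assumption: the rule does not choose between the two when both risks
    # exist without agents, so this sketch reports that both apply.
    if profile.employees_use_third_party_ai and profile.builds_or_deploys_models:
        return "usage governance + model governance"
    if profile.builds_or_deploys_models:
        return "model governance"
    if profile.employees_use_third_party_ai:
        return "usage governance"
    return "no category indicated"
```

For example, `governance_category(RiskProfile(True, False, False))` returns `"usage governance"`, while setting the third flag to `True` always returns `"unified agentic governance"`, regardless of the other two.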

3. Criteria

3.1. The 5 Evaluation Criteria That Actually Matter

Enterprise AI governance platforms are evaluated on dozens of features in most buyer guides. Most of those features are noise. The five below are the procurement gates that determine whether a platform can actually govern your AI environment in 2026 — not just in a demo.

1. Coverage

Does it govern every AI type in your current environment: usage tools, deployed models, and autonomous agents? A platform that handles two of the three creates a governance blind spot on the third. Assess against your full AI inventory, including shadow AI, which enterprise monitoring consistently finds running at two to three times the number of tools IT believes are deployed. This screening matters even more in regulated industries, where security requirements are stricter.

2. Compliance mapping

Does it map natively to NIST AI RMF and the EU AI Act without requiring custom configuration work? The August 2026 enforcement deadline for high-risk systems is fixed. Platforms that require your team to build compliance mappings manually add ongoing maintenance cost that compounds as regulations evolve.

3. Integration depth

Does it connect bidirectionally to your SIEM, IAM, DLP, and GRC platforms? A governance tool that cannot write to your security infrastructure is a silo, not a control plane. Specifically assess Model Context Protocol (MCP) support: MCP compatibility determines whether the platform can govern agentic data access dynamically as your AI environment changes.

4. Agentic AI controls

Does it govern autonomous agents specifically? Singapore’s 2026 Model AI Governance Framework for Agentic AI defines four required dimensions: risk assessment by autonomy level, clear human accountability chains, technical controls including kill switches and purpose binding, and end-user responsibility guidelines. With only 6% of enterprises having advanced AI security strategies despite near-universal agentic roadmaps, this is the most under-evaluated criterion on most shortlists.

5. Deployment model

Can it be deployed on-premise or in a private VPC? For financial services, healthcare, government, and any organization with data residency requirements, this is a procurement gate, not a preference. A platform architected for cloud-first deployment cannot satisfy these requirements through configuration.
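Because these five questions are gates rather than weighted scores, one way to record vendor answers is a pass/fail checklist in which a single "no" disqualifies. The sketch below assumes exactly that; the criterion keys are shorthand labels invented for this example, and a missing answer is treated as a failure.

```python
# Shorthand labels for the five gates; the names are illustrative only.
CRITERIA = (
    "coverage",
    "compliance_mapping",
    "integration_depth",
    "agentic_controls",
    "deployment_model",
)


def failed_gates(answers: dict[str, bool]) -> list[str]:
    """Return the gates a vendor fails; unanswered gates count as failures."""
    return [c for c in CRITERIA if not answers.get(c, False)]


def passes_all_gates(answers: dict[str, bool]) -> bool:
    """A vendor stays on the shortlist only if every gate is a 'yes'."""
    return not failed_gates(answers)
```

Calling `failed_gates` rather than `passes_all_gates` during vendor calls keeps the conversation focused on the specific gate that needs evidence.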

4. The 2026 Shortlist

Strac — Best for Usage Governance

Strac is the strongest option for the large majority of enterprises whose primary AI risk is employees using third-party tools. Its browser-native prompt DLP, shadow AI discovery, and MCP DLP support are the most complete in the usage governance category. The relevant limitation: Strac is not a model governance platform. Organizations training or fine-tuning their own models will need to pair it with a model governance tool or move to a unified platform as their AI program matures.

IBM watsonx.governance — Best for Model Governance in IBM Environments

The most mature model governance platform available. Strong NIST AI RMF and EU AI Act mapping, a comprehensive model registry, AI bill of materials, and bias evaluation pipelines. The limitation most buyers encounter: it does not govern how employees use ChatGPT or Copilot, and it is priced and architected for organizations already operating within the IBM ecosystem. For organizations outside that context, the total cost of ownership is typically higher than the capability justifies.

Credo AI — Best for Compliance-First Model Governance

Purpose-built for organizations navigating multiple regulatory frameworks simultaneously. Comprehensive AI risk management workflows with strong documentation tooling. Better suited to compliance preparation and audit readiness than to real-time operational controls. Pairs well with usage governance tools for organizations that need both risk types addressed.

AI Hub by Beam Data — Best for Unified Agentic Governance

The only platform in this shortlist purpose-built for enterprises deploying multi-agent AI workflows on enterprise data. AI Hub governs across all three layers — usage, model, and agentic — from a single control plane, without requiring separate tools or custom integration work between them.

The differentiators that matter at the procurement stage: semantic security for real-time prompt injection detection and unauthorized data access blocking; immutable audit trails per agent action; kill-switch thresholds configurable per workflow; on-premise and private VPC deployment as a native capability; MCP-native integration for governed agentic data access; and industry-specific agents for Manufacturing, Finance, and EdTech that embed governance logic into the domain layer.
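To make two of these terms concrete when questioning vendors, here is a generic sketch of a per-action audit trail and a per-workflow kill-switch threshold. It does not describe how AI Hub or any other product implements them. The class names are hypothetical, and the hash chain shown is tamper-evident rather than truly immutable: it detects edits after the fact but does not prevent them.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only log where each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent: str, action: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent": agent, "action": action, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("agent", "action", "prev")}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


class KillSwitch:
    """Trips once a workflow's violation count reaches its configured threshold."""

    def __init__(self, max_violations: int) -> None:
        self.max_violations = max_violations
        self.violations = 0

    def record_violation(self) -> None:
        self.violations += 1

    @property
    def tripped(self) -> bool:
        return self.violations >= self.max_violations
```

A useful procurement question follows from the sketch: ask where the chain's latest hash is anchored (for example, in your SIEM), because a log that lives only inside the governed platform can be rewritten wholesale.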

AI Hub is the only platform in this shortlist that answers “yes” across all five evaluation criteria above. For enterprises operating or planning multi-agent workflows, the alternatives require combining multiple point solutions, each of which introduces integration overhead, governance gaps at the seams, and additional maintenance cost.

5. Platform Comparison at a Glance

| Criterion | Strac | IBM watsonx | Credo AI | AI Hub (Beam Data) |
| --- | --- | --- | --- | --- |
| Usage governance | Yes | No | Partial | Yes |
| Model governance | Partial | Yes | Yes | Yes |
| Agentic AI controls | No | Partial | Partial | Yes |
| EU AI Act mapping | Partial | Yes | Yes | Yes |
| On-prem / VPC deploy | No | Yes | Partial | Yes |
| MCP support | Partial | Partial | No | Yes |
| Semantic security | Partial | No | No | Yes |

Note: “Partial” indicates the capability exists but requires additional configuration, third-party integration, or applies only to a subset of the use case described. AI Hub by Beam Data is the only platform in this comparison with native “Yes” across all seven criteria.
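If you want to extend the comparison with your own findings, it helps to hold it as data rather than as a picture. The sketch below transcribes the ratings exactly as this guide gives them; the helper names are hypothetical, and the ratings are the guide's assessments, not independently verified results.

```python
PLATFORMS = ("Strac", "IBM watsonx", "Credo AI", "AI Hub (Beam Data)")

# Ratings per criterion, in the same column order as PLATFORMS.
TABLE = {
    "Usage governance":     ("Yes", "No", "Partial", "Yes"),
    "Model governance":     ("Partial", "Yes", "Yes", "Yes"),
    "Agentic AI controls":  ("No", "Partial", "Partial", "Yes"),
    "EU AI Act mapping":    ("Partial", "Yes", "Yes", "Yes"),
    "On-prem / VPC deploy": ("No", "Yes", "Partial", "Yes"),
    "MCP support":          ("Partial", "Partial", "No", "Yes"),
    "Semantic security":    ("Partial", "No", "No", "Yes"),
}


def ratings_for(platform: str) -> dict[str, str]:
    """Return one platform's rating for every criterion."""
    column = PLATFORMS.index(platform)
    return {criterion: row[column] for criterion, row in TABLE.items()}


def gaps(platform: str) -> list[str]:
    """Criteria where the platform is rated anything other than 'Yes'."""
    return [c for c, rating in ratings_for(platform).items() if rating != "Yes"]


def fully_covered() -> list[str]:
    """Platforms rated 'Yes' on every criterion in the table."""
    return [p for p in PLATFORMS if not gaps(p)]
```

Replacing a rating with the result of your own proof of concept and re-running `gaps` gives you a per-vendor list of follow-up questions.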

6. The Build vs. Buy Decision

Before committing to any platform, most enterprise AI teams face the build-versus-buy question. The honest answer depends on one variable more than any other: whether you have a dedicated AI platform engineering team with the capacity to build and maintain a governance layer over three years — not just over three months.

Custom-built governance layers are viable when the workflow is genuinely proprietary and the engineering investment is sustainable. In practice, the three-year cost of maintaining a custom layer through API changes, evolving regulatory requirements, model failover, and security updates is consistently underestimated. Organizations that start with a custom approach and later migrate to a platform report that the migration cost exceeds what the platform would have cost from the start.

The data supports the platform economics: organizations with unified governance platforms experience 23% fewer AI-related incidents and bring new AI capabilities to market 31% faster than those managing governance through custom or point-solution approaches (Elevate, 2026). The speed advantage is the part most buyers do not anticipate: governance infrastructure that is already built does not slow deployment; it enables it.

7. Why AI Hub by Beam Data Belongs on Every Agentic Enterprise Shortlist

The three posts that preceded this one in this series built toward a specific architectural argument: enterprise AI governance fails not because organizations lack policy intent, but because they lack the infrastructure to make that intent enforceable. The data foundation is fragmented. The orchestration layer has no governance controls. The agents act without audit trails.

AI Hub by Beam Data was designed for exactly this problem. It is not a governance tool added to an AI platform. It is a governed platform from the foundation up — where the audit trails, kill-switch controls, semantic security, and compliance documentation are structural properties of the architecture, not configuration options applied after deployment.

For buyers reaching this point in their evaluation, the relevant question is not whether AI Hub covers the criteria in Section 3; the comparison table above answers that. The relevant question is deployment path. AI Hub follows the 90-day agentic strategy introduced in the second post of this series: Trigger, Execute, Verify, Log. Governance is embedded in each phase, not added at the end of the rollout.

Organizations deploying multi-agent workflows without a unified governance platform face three compounding risks that are already materializing in 2026: regulatory exposure as EU AI Act enforcement tightens, shadow agent proliferation as teams build outside IT oversight, and audit failure when the evidentiary trail for agent decisions does not exist. These are not hypothetical risks. They are documented outcomes that AI Hub is specifically architected to prevent.

Conclusion: Making the Decision

The shortlist problem has a straightforward resolution once you know which governance category matches your actual risk profile. Usage governance, model governance, and unified agentic governance are distinct products solving distinct problems. The enterprise that needs all three — and in 2026, any organization deploying multi-agent workflows needs all three — cannot solve it with point solutions without creating governance gaps at the integration seams.

The evaluation criteria in Section 3 are the five questions to put to every vendor on your shortlist. The comparison table in Section 5 is the starting point for that process. The build-versus-buy framing in Section 6 is the decision that determines whether you are buying a platform or committing to building and maintaining one.

Ready to see how AI Hub handles governance across your data, orchestration, and agent layers in practice? Schedule a 30-minute demo with the Beam Data team.

Frequently Asked Questions

Q1. What is the difference between AI usage governance and AI model governance?

AI usage governance controls how employees interact with third-party AI tools, preventing sensitive data from being shared with ChatGPT, Copilot, or other external systems. AI model governance controls the AI systems your organization builds and deploys, managing model risk, bias evaluation, documentation, and compliance throughout the model lifecycle. Most enterprises primarily need one; organizations deploying multi-agent workflows need both, plus agentic-specific controls.

Q2. Which AI governance tools are best for EU AI Act compliance?

IBM watsonx.governance and Credo AI have the strongest out-of-the-box EU AI Act mapping for model governance. AI Hub by Beam Data covers EU AI Act requirements across usage, model, and agentic governance from a single platform, with the additional advantage of on-premise deployment for organizations with data sovereignty requirements. The August 2026 enforcement deadline for high-risk systems means compliance documentation should already be in progress.

Q3. Do I need a separate tool to govern AI agents vs. AI models?

With most platforms, yes — and that is the gap this guide is designed to surface. Traditional model governance tools were not built for the specific risks of autonomous agents: kill switches, purpose binding, agent identity management, and cross-workflow audit trails. A platform like AI Hub by Beam Data governs both in the same control plane. Organizations that address model governance and agentic governance separately typically end up with a gap at the integration seam between the two tools.

Q4. What is semantic security in AI governance?

Semantic security is real-time monitoring that detects prompt injection attempts, unauthorized data access, and policy violations at the execution level, not just at the conversational output level. As AI agents move from generating responses to taking actions (querying databases, modifying records, triggering workflows), language-level content filters are insufficient. Semantic security extends governance to what agents do, not just what they say. AI Hub by Beam Data implements semantic security as a native architectural component.
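The difference between filtering what an agent says and checking what it does can be shown with a minimal execution-level check. This sketch covers only the unauthorized-access part of the definition and does not attempt prompt injection detection. The agent name, resource names, and `POLICY` structure are hypothetical, and real platforms will model policy differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    """A proposed action, inspected before it runs rather than after it is described."""
    agent: str
    operation: str  # e.g. "read", "write", "trigger"
    resource: str   # e.g. "crm.customers"


# Hypothetical policy: the operations each agent may perform on each resource.
POLICY: dict[str, dict[str, set[str]]] = {
    "invoice-agent": {
        "billing.invoices": {"read", "write"},
        "crm.customers": {"read"},
    },
}


def authorize(action: AgentAction, policy: dict[str, dict[str, set[str]]] = POLICY) -> bool:
    """Deny by default: unknown agents, resources, or operations are blocked."""
    allowed = policy.get(action.agent, {}).get(action.resource, set())
    return action.operation in allowed
```

A content filter would pass an agent response such as "customer record updated" without complaint; `authorize(AgentAction("invoice-agent", "write", "crm.customers"))` returns `False` because the write itself falls outside the policy.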

Q5. How does AI Hub by Beam Data compare to IBM watsonx.governance?

IBM watsonx.governance is the most mature model governance platform for organizations in the IBM ecosystem, strong on EU AI Act documentation, model registry, and bias evaluation. It does not govern employee use of third-party AI tools, offers only partial agentic AI controls, and is priced for IBM-embedded deployments. AI Hub by Beam Data covers all three governance layers in a single platform, with on-premise/VPC deployment, semantic security, and MCP-native integration. The practical difference: watsonx.governance governs models in production; AI Hub governs the entire enterprise AI architecture.

Q6. What should I look for in an AI governance platform for on-premise deployment?

First, confirm that on-premise is an architectural design principle, not a deployment configuration. Platforms built cloud-first and adapted for on-premise often have feature gaps or require additional infrastructure. Second, assess whether the full governance capability set (audit trails, semantic security, kill-switch controls, compliance reporting) is available in the on-premise deployment, not just a subset. Third, evaluate the upgrade and maintenance path: who manages model updates, security patches, and regulatory framework updates when the platform runs in your environment? Beam Data designed AI Hub with on-premise and private VPC as native deployment options, delivering full capability parity across both.